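Task04: Machine Translation

Machine Translation

Author: like alone

Machine translation (MT): automatically translating a piece of text from one language into another. Solving this problem with neural networks is usually called neural machine translation (NMT).

Key characteristics: the output is a sequence of words rather than a single word, and the output sequence may differ in length from the source sequence.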

import os
os.listdir('/home/kesci/input/')

import sys
sys.path.append('/home/kesci/input/d2l9528/')  # make the local d2l package importable

import collections
import time
import zipfile

import d2l
from d2l.data.base import Vocab

import torch
import torch.nn as nn
import torch.nn.functional as F
from torch import optim
from torch.utils import data

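# Data preprocessing
with open('/home/kesci/input/fraeng6506/fra.txt', 'r', encoding='utf-8') as f:
    raw_text = f.read()
print(raw_text[0:1000])

def preprocess_raw(text):
    # Replace non-breaking spaces (U+202F, U+00A0) with ordinary spaces.
    text = text.replace('\u202f', ' ').replace('\xa0', ' ')
    out = ''
    for i, char in enumerate(text.lower()):
        # Insert a space before ',', '!' and '.' unless one is already there,
        # so punctuation becomes its own token when splitting on spaces.
        if char in (',', '!', '.') and i > 0 and text[i-1] != ' ':
            out += ' '
        out += char
    return out

text = preprocess_raw(raw_text)
print(text[0:1000])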
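To make the effect of preprocess_raw concrete, here is a minimal sketch that runs it on a short made-up string. The sample text is an assumption for illustration and is not taken from fra.txt; the function is copied so the snippet runs on its own.

```python
def preprocess_raw(text):
    # Replace non-breaking spaces with ordinary spaces.
    text = text.replace('\u202f', ' ').replace('\xa0', ' ')
    out = ''
    for i, char in enumerate(text.lower()):
        # Add a space before punctuation that is glued to the previous word.
        if char in (',', '!', '.') and i > 0 and text[i-1] != ' ':
            out += ' '
        out += char
    return out

# Hypothetical sample: two tab-separated sentence pairs.
sample = 'Go.\tVa !\nHi!\tSalut\u202f!'
cleaned = preprocess_raw(sample)
print(repr(cleaned))  # 'go .\tva !\nhi !\tsalut !'
```

Note that the text is lowercased, the narrow non-breaking space before the final "!" becomes a normal space, and "Go." and "Hi!" gain a space before their punctuation.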

# Tokenization
num_examples = 50000
source, target = [], []
for i, line in enumerate(text.split('\n')):
    if i >= num_examples:  # stop after num_examples lines
        break
    parts = line.split('\t')
    if len(parts) >= 2:
        source.append(parts[0].split(' '))
        target.append(parts[1].split(' '))

source[0:3], target[0:3]

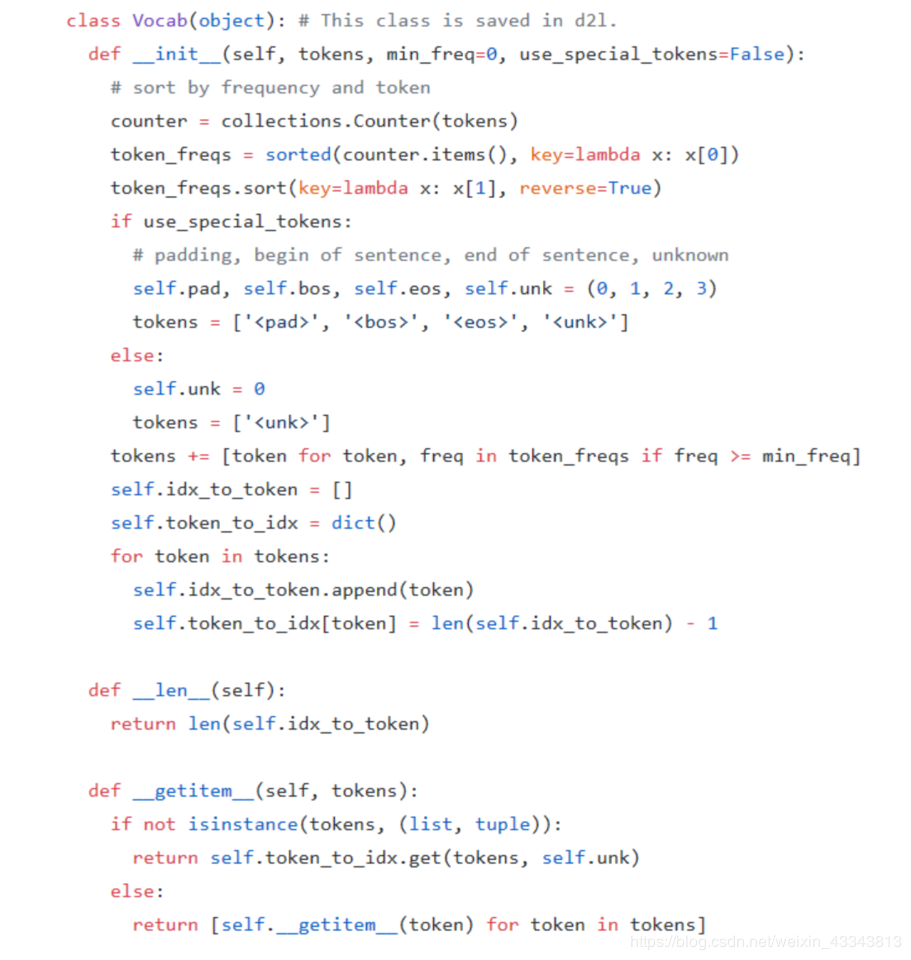
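The tokenization step can be tried on a tiny input. The sketch below uses a hypothetical two-line text (not from the dataset) and the same split logic; it also shows that a source sentence and its translation need not have the same number of tokens.

```python
# Hypothetical preprocessed text: one "english<TAB>french" pair per line.
text = "go .\tva !\ni'm home .\tje suis chez moi ."

source, target = [], []
for line in text.split('\n'):
    parts = line.split('\t')
    if len(parts) >= 2:  # skip lines without a translation
        source.append(parts[0].split(' '))
        target.append(parts[1].split(' '))

print(source)  # [['go', '.'], ["i'm", 'home', '.']]
print(target)  # [['va', '!'], ['je', 'suis', 'chez', 'moi', '.']]
print([(len(s), len(t)) for s, t in zip(source, target)])  # [(2, 2), (3, 5)]
```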

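# Build the vocabulary (the Vocab class is shown further below)
def build_vocab(tokens):
    # Flatten the list of token lists into a single list of tokens.
    tokens = [token for line in tokens for token in line]
    # Keep tokens that occur at least min_freq times and add the special tokens.
    return d2l.data.base.Vocab(tokens, min_freq=3, use_special_tokens=True)

src_vocab = build_vocab(source)
len(src_vocab)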
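As a rough mental model of what a vocabulary object does here, the sketch below defines a hypothetical SimpleVocab. It is not d2l's Vocab implementation: the class name, the special-token indices (pad=0, bos=1, eos=2, unk=3), and the alphabetical tie-breaking are all assumptions made for illustration.

```python
import collections

class SimpleVocab:
    """Hypothetical stand-in for a vocabulary; not the d2l class."""

    def __init__(self, tokens, min_freq=0, use_special_tokens=False):
        counter = collections.Counter(tokens)
        # Most frequent first; ties broken alphabetically (an assumption).
        token_freqs = sorted(counter.items(), key=lambda x: (-x[1], x[0]))
        if use_special_tokens:
            self.pad, self.bos, self.eos, self.unk = 0, 1, 2, 3
            self.idx_to_token = ['<pad>', '<bos>', '<eos>', '<unk>']
        else:
            self.unk = 0
            self.idx_to_token = ['<unk>']
        # Rare tokens are left out and will map to <unk>.
        self.idx_to_token += [tok for tok, freq in token_freqs
                              if freq >= min_freq and tok not in self.idx_to_token]
        self.token_to_idx = {tok: i for i, tok in enumerate(self.idx_to_token)}

    def __len__(self):
        return len(self.idx_to_token)

    def __getitem__(self, tokens):
        # Accept a single token or a list of tokens.
        if not isinstance(tokens, (list, tuple)):
            return self.token_to_idx.get(tokens, self.unk)
        return [self[tok] for tok in tokens]

tokens = ['go', '.', 'hi', '.', 'run', '.', 'go']
vocab = SimpleVocab(tokens, min_freq=2, use_special_tokens=True)
print(len(vocab))                 # 6
print(vocab[['go', '.', 'hi']])   # [5, 4, 3]
```

Here "hi" occurs only once, so with min_freq=2 it falls back to the unknown-token index.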

# Load the dataset
def pad(line, max_len, padding_token):
    # Truncate lines longer than max_len; pad shorter ones on the right.
    if len(line) > max_len:
        return line[:max_len]
    return line + [padding_token] * (max_len - len(line))

pad(src_vocab[source[0]], 10, src_vocab.pad)
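The two branches of pad can be checked with plain integer lists. This is a small self-contained sketch; the index values and the padding index 0 are made up for illustration.

```python
def pad(line, max_len, padding_token):
    # Truncate lines longer than max_len; pad shorter ones on the right.
    if len(line) > max_len:
        return line[:max_len]
    return line + [padding_token] * (max_len - len(line))

short = pad([5, 4], 4, 0)
long_ = pad([1, 2, 3, 4, 5], 4, 0)
print(short)  # [5, 4, 0, 0]
print(long_)  # [1, 2, 3, 4]
```

Every line comes out with exactly max_len entries, which is what allows the lines to be stacked into one tensor.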

def build_array(lines, vocab, max_len, is_source):
    # Map every token to its vocabulary index.
    lines = [vocab[line] for line in lines]
    # Target sentences are wrapped in <bos> ... <eos>.
    if not is_source:
        lines = [[vocab.bos] + line + [vocab.eos] for line in lines]
    array = torch.tensor([pad(line, max_len, vocab.pad) for line in lines])
    # Count the non-padding tokens in each row (sum along dimension 1).
    valid_len = (array != vocab.pad).sum(1)
    return array, valid_len

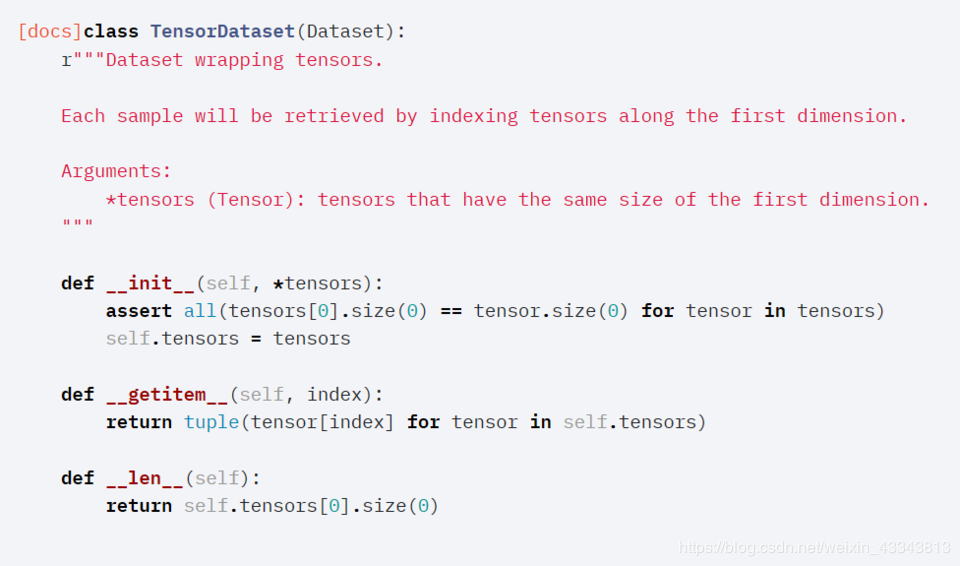
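The following sketch mirrors build_array with plain Python lists instead of tensors, so the valid-length computation can be followed by hand. The function name build_rows and the indices PAD=0, BOS=1, EOS=2 are assumptions for illustration; the per-row count plays the role of `(array != vocab.pad).sum(1)`.

```python
PAD, BOS, EOS = 0, 1, 2  # assumed special-token indices

def pad(line, max_len, padding_token):
    if len(line) > max_len:
        return line[:max_len]
    return line + [padding_token] * (max_len - len(line))

def build_rows(lines, max_len, is_source):
    # Target lines get <bos> in front and <eos> at the end.
    if not is_source:
        lines = [[BOS] + line + [EOS] for line in lines]
    rows = [pad(line, max_len, PAD) for line in lines]
    # Valid length = number of non-padding entries in each row.
    valid_len = [sum(tok != PAD for tok in row) for row in rows]
    return rows, valid_len

rows, valid_len = build_rows([[5, 4], [7, 8, 9, 6]], max_len=5, is_source=False)
print(rows)       # [[1, 5, 4, 2, 0], [1, 7, 8, 9, 6]]
print(valid_len)  # [4, 5]
```

The second row shows a side effect of truncation: when a target line plus its two special tokens exceeds max_len, the trailing <eos> is cut off.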

def load_data_nmt(batch_size, max_len):  # This function is saved in d2l.
    src_vocab, tgt_vocab = build_vocab(source), build_vocab(target)
    src_array, src_valid_len = build_array(source, src_vocab, max_len, True)
    tgt_array, tgt_valid_len = build_array(target, tgt_vocab, max_len, False)
    train_data = data.TensorDataset(src_array, src_valid_len, tgt_array, tgt_valid_len)
    train_iter = data.DataLoader(train_data, batch_size, shuffle=True)
    return src_vocab, tgt_vocab, train_iter

src_vocab, tgt_vocab, train_iter = load_data_nmt(batch_size=2, max_len=8)
for X, X_valid_len, Y, Y_valid_len in train_iter:
    print('X =', X.type(torch.int32), '\nValid lengths for X =', X_valid_len,
          '\nY =', Y.type(torch.int32), '\nValid lengths for Y =', Y_valid_len)
    break
