Andrew Ng Course Assignment: Logistic Regression with a Neural Network Mindset

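Contents

0. Import packages
1. Data preprocessing
2. Forward propagation: computing the gradients and the cost
3. Optimization function: gradient descent
4. Prediction function
5. Logistic regression as a neural network
6. The main function
7. Results
8. Supplement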

0. Import packages

h5py: the dataset is stored as HDF5 (.h5) files, so this package is needed to read it.

matplotlib: used to draw charts in Python, similar to plotting in MATLAB.

Author: qq_41893638

    import numpy as np
    import matplotlib.pyplot as plt
    import h5py

1. Data preprocessing

Convert the data into a format that is convenient to work with:

- The input is reshaped to [features x samples], so each column holds one image. The number of features is 64 x 64 x 3 = 12288 (64 x 64 pixels with 3 RGB channels).
- Pixel values are scaled from the range 0 to 255 down to the range 0 to 1, which simplifies the later computations.

    def loadDataset():
        train_data = h5py.File('../data/train_catvnoncat.h5', 'r')
        test_data = h5py.File('../data/test_catvnoncat.h5', 'r')

        # 209x64x64x3: 209 RGB images
        train_set_x = np.array(train_data["train_set_x"])  # training set features
        # 50x64x64x3: 50 RGB images
        test_set_x = np.array(test_data["test_set_x"])  # test set features

        # Reshape the 1-D label arrays into 1 x m matrices for later convenience.
        # Labels are 0 or 1: 0 means "not a cat", 1 means "cat".
        train_set_y = np.array(train_data["train_set_y"]).reshape(1, -1)  # training set labels
        test_set_y = np.array(test_data["test_set_y"]).reshape(1, -1)  # test set labels
        classes = np.array(test_data["list_classes"]).reshape(1, -1)  # the list of classes

        # To show an image:
        # index = 20
        # plt.imshow(train_set_x[index])
        # plt.show()

        # The first reshape argument keeps the number of images;
        # -1 lets NumPy infer the remaining dimension (64*64*3 = 12288).
        # The transpose then puts one image in each column.
        train_set_x_flatten = train_set_x.reshape(train_set_x.shape[0], -1).T
        test_set_x_flatten = test_set_x.reshape(test_set_x.shape[0], -1).T

        # Normalize the pixel values to [0, 1]
        train_set_x = train_set_x_flatten / 255
        test_set_x = test_set_x_flatten / 255

        return train_set_x, train_set_y, test_set_x, test_set_y, classes
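The flatten-and-normalize step can be checked without the .h5 files. The sketch below is only an illustration: the array `images` is random data standing in for the real images (an assumption, not part of the dataset), and it uses 5 images instead of 209.

```python
import numpy as np

# Hypothetical stand-in for the real dataset: 5 random 64x64 RGB "images".
rng = np.random.default_rng(0)
images = rng.integers(0, 256, size=(5, 64, 64, 3), dtype=np.uint8)

# Flatten every image into one row, then transpose so each column is one sample.
flat = images.reshape(images.shape[0], -1).T

# Scale pixel values from [0, 255] to [0, 1].
scaled = flat / 255

print(flat.shape)                       # (12288, 5)
print(scaled.min() >= 0.0, scaled.max() <= 1.0)
```

Column `i` of `flat` contains exactly the pixels of `images[i]`, which is why the later code can treat `X.shape[1]` as the number of samples.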

2. Forward propagation: computing the gradients and the cost

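The gradients are computed with the formulas derived in Andrew Ng's deep learning course.

- The sigmoid function is defined by hand; it takes a single line of code.
- The gradients are returned in a dictionary, which makes them easy to look up later.

    def sigmoid(z):
        return 1 / (1 + np.exp(-z))

    def propagate(w, b, X, Y):
        # Number of samples
        m = X.shape[1]

        # Compute the activations for all samples in one step
        A = sigmoid(np.dot(w.T, X) + b)

        # Compute the cost (cross-entropy averaged over the samples)
        cost = (-1 / m) * np.sum(Y * np.log(A) + (1 - Y) * (np.log(1 - A)))

        # Compute the gradients from the formulas
        dw = (1 / m) * np.dot(X, (A - Y).T)
        db = (1 / m) * np.sum(A - Y)

        # Remove dimensions of size 1 (not needed here, np.sum already returns a scalar)
        # cost = np.squeeze(cost)

        # Collect the gradients in a dictionary for easy lookup
        grads = {"dw": dw, "db": db}
        return grads, cost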
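One way to gain confidence in the gradient formulas dw = (1/m) X (A - Y)^T and db = (1/m) sum(A - Y) is to compare them with numerical derivatives of the cost. The following is a minimal sketch of such a check; the helper `cost_and_grads`, the random data, and the step size `eps` are assumptions made for illustration and are not part of the original assignment.

```python
import numpy as np

def sigmoid(z):
    return 1 / (1 + np.exp(-z))

def cost_and_grads(w, b, X, Y):
    # Same formulas as propagate(), returned as a plain tuple.
    m = X.shape[1]
    A = sigmoid(np.dot(w.T, X) + b)
    cost = (-1 / m) * np.sum(Y * np.log(A) + (1 - Y) * np.log(1 - A))
    dw = (1 / m) * np.dot(X, (A - Y).T)
    db = (1 / m) * np.sum(A - Y)
    return cost, dw, db

# Small random problem (hypothetical data): 3 features, 8 samples.
rng = np.random.default_rng(0)
X = rng.normal(size=(3, 8))
Y = rng.integers(0, 2, size=(1, 8)).astype(float)
w = rng.normal(size=(3, 1)) * 0.1
b = 0.2

cost, dw, db = cost_and_grads(w, b, X, Y)

# Central finite differences: (J(theta + eps) - J(theta - eps)) / (2 * eps)
eps = 1e-6
num_dw = np.zeros_like(w)
for i in range(w.shape[0]):
    step = np.zeros_like(w)
    step[i, 0] = eps
    c_plus, _, _ = cost_and_grads(w + step, b, X, Y)
    c_minus, _, _ = cost_and_grads(w - step, b, X, Y)
    num_dw[i, 0] = (c_plus - c_minus) / (2 * eps)

c_plus, _, _ = cost_and_grads(w, b + eps, X, Y)
c_minus, _, _ = cost_and_grads(w, b - eps, X, Y)
num_db = (c_plus - c_minus) / (2 * eps)

# Largest disagreement between the analytic and the numerical gradients.
max_err = max(np.max(np.abs(num_dw - dw)), abs(num_db - db))
print(max_err)
```

If the analytic gradients are right, `max_err` should be tiny compared with the gradient values themselves.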

3. Optimization function: gradient descent

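This function is fairly simple: it calls the forward propagation function to get the gradients, then uses the learning rate to update the parameters w and b.

Each iteration performs exactly one update and also passes over the whole training set once (full-batch gradient descent). This kind of iteration suits small datasets. Neural networks are usually trained with mini-batch gradient descent instead.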

    def optimize(w, b, X, Y, iter, learning_rate):
        costs = []

        # Start iterating; each iteration here corresponds to one epoch
        # in neural-network training
        for i in range(iter):
            grads, cost = propagate(w, b, X, Y)
            dw = grads["dw"]
            db = grads["db"]

            # Gradient descent update
            w = w - learning_rate * dw
            b = b - learning_rate * db

            # Record and print the cost every 100 iterations
            if i % 100 == 0:
                costs.append(cost)
                print("Iteration: {}, cost: {:f}".format(i, cost))

        params = {"w": w, "b": b}
        grads = {"dw": dw, "db": db}
        return params, grads, costs
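For contrast with the full-batch loop, here is a rough sketch of mini-batch gradient descent, where each update uses only a slice of the training set. Everything in it is illustrative: `minibatch_optimize`, `batch_size`, `epochs`, and the synthetic data are assumptions and do not appear in the original assignment.

```python
import numpy as np

def sigmoid(z):
    return 1 / (1 + np.exp(-z))

def propagate(w, b, X, Y):
    # Cost and gradients of logistic regression on the given samples.
    m = X.shape[1]
    A = sigmoid(np.dot(w.T, X) + b)
    cost = (-1 / m) * np.sum(Y * np.log(A) + (1 - Y) * np.log(1 - A))
    dw = (1 / m) * np.dot(X, (A - Y).T)
    db = (1 / m) * np.sum(A - Y)
    return dw, db, cost

def minibatch_optimize(w, b, X, Y, epochs, learning_rate, batch_size, seed=0):
    rng = np.random.default_rng(seed)
    m = X.shape[1]
    for _ in range(epochs):
        # Shuffle the sample order once per epoch.
        order = rng.permutation(m)
        for start in range(0, m, batch_size):
            idx = order[start:start + batch_size]
            # One parameter update per mini-batch instead of per full pass.
            dw, db, _ = propagate(w, b, X[:, idx], Y[:, idx])
            w = w - learning_rate * dw
            b = b - learning_rate * db
    return w, b

# Synthetic, linearly separable data (hypothetical): 2 features, 200 samples.
rng = np.random.default_rng(1)
X = rng.normal(size=(2, 200))
Y = (X[0:1, :] + X[1:2, :] > 0).astype(float)

w0, b0 = np.zeros((2, 1)), 0.0
_, _, cost_before = propagate(w0, b0, X, Y)
w1, b1 = minibatch_optimize(w0, b0, X, Y, epochs=20,
                            learning_rate=0.1, batch_size=32)
_, _, cost_after = propagate(w1, b1, X, Y)
print(cost_before, cost_after)
```

With 209 training images the full-batch version is perfectly adequate; mini-batches mainly pay off when the dataset is too large to process in one step.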

4. Prediction function

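Use the trained parameters to compute the predicted probabilities for X, then turn them into 0/1 predictions with a list comprehension (threshold 0.5).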

    def predict(w, b, X):
        A = sigmoid(np.dot(w.T, X) + b)

        # A has shape (1, m); transpose it to a column so that the list
        # comprehension iterates over one sample at a time
        Y_prediction = [1 if item > 0.5 else 0 for item in A.T]
        return Y_prediction
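The same thresholding can also be written without a Python loop. The sketch below is an alternative, not the version used in this post: `predict_vectorized` is a hypothetical name, and it returns a NumPy array of shape (1, m) rather than a list.

```python
import numpy as np

def sigmoid(z):
    return 1 / (1 + np.exp(-z))

def predict_vectorized(w, b, X):
    # Probabilities for all samples at once, shape (1, m).
    A = sigmoid(np.dot(w.T, X) + b)
    # Boolean comparison, then cast True/False to 1/0.
    return (A > 0.5).astype(int)

# Tiny hand-made example: 2 features, 3 samples.
w = np.array([[1.0], [-1.0]])
b = 0.0
X = np.array([[2.0, 0.0, -1.0],
              [0.0, 3.0, -2.0]])

preds = predict_vectorized(w, b, X)
print(preds)  # [[1 0 1]]
```

Here the linear scores are 2, -3 and 1, so the first and third samples land above the 0.5 threshold and the second one below it.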

5. Logistic regression as a neural network

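This function mainly calls the functions defined above to carry out the regression task, and prints the final training and test accuracy.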

    def LogisticInNeural(X_train, Y_train, X_test, Y_test, iter, learning_rate=0.5):
        # Initialize the parameters to zero
        w = np.zeros([X_train.shape[0], 1])
        b = 0

        parameters, grads, costs = optimize(w, b, X_train, Y_train, iter, learning_rate)
        w, b = parameters["w"], parameters["b"]

        Y_prediction_test = predict(w, b, X_test)
        Y_prediction_train = predict(w, b, X_train)

        # Accuracy = fraction of predictions that match the labels
        accuracy_train = np.sum(Y_prediction_train == Y_train) / Y_train.shape[1]
        accuracy_test = np.sum(Y_prediction_test == Y_test) / Y_test.shape[1]
        print(accuracy_train)
        print(accuracy_test)

        d = {
            "costs": costs,
            "Y_prediction_test": Y_prediction_test,
            "Y_prediction_train": Y_prediction_train,
            "w": w,
            "b": b,
            "learning_rate": learning_rate,
            "num_iterations": iter}
        return d
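The accuracy lines compare a Python list of predictions with a label array of shape (1, m). A small sketch of what happens in that comparison, using made-up labels and predictions (both are assumptions for illustration only):

```python
import numpy as np

# Hypothetical labels with shape (1, 5) and predictions as a plain list.
Y_true = np.array([[1, 0, 1, 1, 0]])
Y_pred_list = [1, 0, 0, 1, 0]

# NumPy converts the list to an array of shape (5,) and broadcasts it
# against the (1, 5) labels, giving an element-wise boolean result.
matches = (Y_pred_list == Y_true)

# Count the matches and divide by the number of samples.
accuracy = np.sum(matches) / Y_true.shape[1]
print(matches.shape, accuracy)  # (1, 5) 0.8

# np.mean over the boolean array gives the same number.
print(np.mean(matches))
```

Four of the five predictions agree with the labels, so the accuracy is 0.8.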

6. The main function

Main tasks:

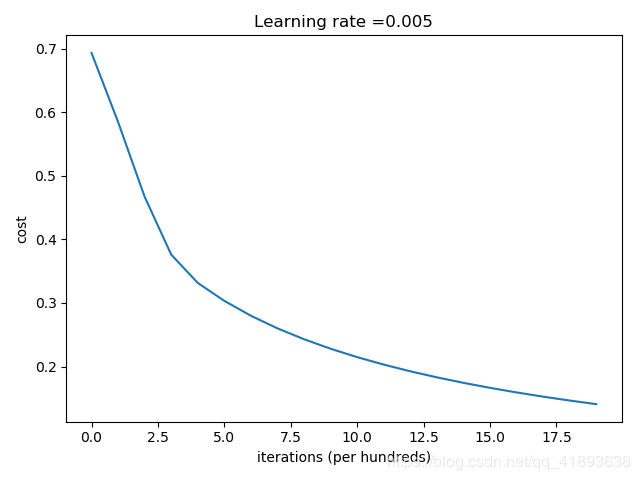

- Load the dataset
- Call the logistic regression model
- Plot the cost against the number of iterations

    def main():
        train_set_x, train_set_y, test_set_x, test_set_y, classes = loadDataset()

        print("====================Testing model====================")
        d = LogisticInNeural(train_set_x, train_set_y, test_set_x, test_set_y,
                             iter=2000, learning_rate=0.005)

        # Plot the cost curve
        costs = d['costs']
        plt.plot(costs)
        plt.ylabel('cost')
        plt.xlabel('iterations (per hundreds)')
        plt.title("Learning rate =" + str(d["learning_rate"]))
        plt.show()

    # Run main() when the file is executed as a script
    if __name__ == "__main__":
        main()

7. Results

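    Iteration: 0, cost: 0.693147
    Iteration: 100, cost: 0.584508
    Iteration: 200, cost: 0.466949
    Iteration: 300, cost: 0.376007
    Iteration: 400, cost: 0.331463
    Iteration: 500, cost: 0.303273
    Iteration: 600, cost: 0.279880
    Iteration: 700, cost: 0.260042
    Iteration: 800, cost: 0.242941
    Iteration: 900, cost: 0.228004
    Iteration: 1000, cost: 0.214820
    Iteration: 1100, cost: 0.203078
    Iteration: 1200, cost: 0.192544
    Iteration: 1300, cost: 0.183033
    Iteration: 1400, cost: 0.174399
    Iteration: 1500, cost: 0.166521
    Iteration: 1600, cost: 0.159305
    Iteration: 1700, cost: 0.152667
    Iteration: 1800, cost: 0.146542
    Iteration: 1900, cost: 0.140872
    0.9904306220095693
    0.7

The last two lines are the training accuracy (about 99.0%) and the test accuracy (70%). The initial cost of 0.693147 equals ln 2, as expected when w and b start at zero and every prediction is 0.5.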

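Overall, the assignment is completed reasonably well. It is still worth experimenting with the learning rate, because different learning rates lead to different final results.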
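A quick way to see the effect of the learning rate is to train the same model several times and compare the final cost. The sketch below does this on synthetic data rather than on the cat dataset; the `train` helper, the three learning rates, and the data are all assumptions chosen for illustration.

```python
import numpy as np

def sigmoid(z):
    return 1 / (1 + np.exp(-z))

def train(X, Y, num_iterations, learning_rate):
    # Full-batch gradient descent from zero-initialized parameters.
    m = X.shape[1]
    w = np.zeros((X.shape[0], 1))
    b = 0.0
    for _ in range(num_iterations):
        A = sigmoid(np.dot(w.T, X) + b)
        w = w - learning_rate * (1 / m) * np.dot(X, (A - Y).T)
        b = b - learning_rate * (1 / m) * np.sum(A - Y)
    # Final cost on the training data.
    A = sigmoid(np.dot(w.T, X) + b)
    return (-1 / m) * np.sum(Y * np.log(A) + (1 - Y) * np.log(1 - A))

# Synthetic, linearly separable data (hypothetical): 2 features, 100 samples.
rng = np.random.default_rng(0)
X = rng.normal(size=(2, 100))
Y = (X[0:1, :] - X[1:2, :] > 0).astype(float)

# Same data, same number of iterations, three different learning rates.
final_costs = {lr: train(X, Y, 200, lr) for lr in (0.001, 0.01, 0.1)}
for lr, cost in final_costs.items():
    print("learning rate: {}, final cost: {:f}".format(lr, cost))
```

On this toy problem the larger learning rates reach a lower cost within the same 200 iterations. On real data a learning rate that is too large can make the cost oscillate or diverge, so the comparison is best repeated on the actual training set.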

Main reference for this article: https://blog.csdn.net/u013733326/article/details/79639509
