Chapter 8 Variable Selection and Regularization - Ridge Regression Analysis

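Ridge regression analysis
0 Loading libraries
1 Data preprocessing
2 Ordinary linear regression and ridge regression
2.1 Least squares: parameter estimation
2.2 Ridge regression: parameter estimation with a fixed ridge parameter
2.3 Ridge regression: selecting the ridge parameter automatically by cross-validation
2.4 Enumerating ridge parameter values, computing the regression coefficients, plotting the ridge trace, and computing VIF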

0 Loading libraries

Author: 喝醉酒的小白

Load the linear regression and ridge regression classes from sklearn's linear_model module.

import numpy as np

import matplotlib.pyplot as plt

import pandas as pd

np.set_printoptions(suppress=True)  # print floats without scientific notation

from sklearn.linear_model import LinearRegression

from sklearn.linear_model import Ridge

from sklearn.linear_model import RidgeCV

1 Data preprocessing

Center and standardize both the independent variables and the dependent variable.

mydata=pd.read_csv('Regression/Regression8/longley.csv')

mydata_normd = (mydata - mydata.mean()) / mydata.std()

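A = mydata_normd.to_numpy()  # plain ndarray; recent scikit-learn versions reject np.matrix inputs

X = A[:, 1:]  # predictors: every column except the first

y = A[:, 0]  # response: the first column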
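The effect of this standardization can be checked on a small example. The sketch below is illustrative only: it uses synthetic data in place of longley.csv, and the names `demo`, `demo_normd` and `Z` are hypothetical. It shows that each standardized column has mean 0 and sample standard deviation 1, and that Z'Z / (n - 1) is then the sample correlation matrix, because pandas `.std()` divides by n - 1 by default.

```python
import numpy as np
import pandas as pd

# Synthetic stand-in for longley.csv (hypothetical data: 16 rows, 3 columns)
rng = np.random.default_rng(0)
demo = pd.DataFrame(rng.normal(loc=50.0, scale=10.0, size=(16, 3)),
                    columns=["y", "x1", "x2"])

# Same transformation as in the text: center each column,
# then divide by its sample standard deviation (pandas uses ddof=1)
demo_normd = (demo - demo.mean()) / demo.std()

Z = demo_normd.to_numpy()
n = Z.shape[0]

col_means = Z.mean(axis=0)        # approximately 0 for every column
col_stds = Z.std(axis=0, ddof=1)  # approximately 1 for every column

# With this scaling, Z'Z / (n - 1) is the sample correlation matrix
corr_from_Z = Z.T @ Z / (n - 1)
corr_pandas = demo.corr().to_numpy()

print(np.allclose(corr_from_Z, corr_pandas))
```

One consequence worth keeping in mind: with this scaling X'X is (n - 1) times the correlation matrix rather than the correlation matrix itself, which affects how ridge parameter values compare with those quoted on the correlation scale.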

2 Ordinary linear regression and ridge regression

2.1 Least squares: parameter estimation

reg01 = LinearRegression()

reg01.fit(X,y)

print('OLS score:', round(reg01.score(X, y), 4))

print('OLS coefficients:', reg01.coef_.round(3))
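As a cross-check on what `LinearRegression` computes, the following sketch solves the normal equations directly and compares the result. It is a minimal illustration on synthetic data, not the Longley data; `Xs`, `ys`, `ols` and `beta_normal_eq` are hypothetical names. Because the data are centered, the fitted intercept is essentially zero and `score` equals 1 - SSE / (y'y).

```python
import numpy as np
from sklearn.linear_model import LinearRegression

# Synthetic data (hypothetical), standardized the same way as in the text
rng = np.random.default_rng(2)
n, p = 16, 3
X_raw = rng.normal(size=(n, p))
y_raw = X_raw @ np.array([2.0, -1.0, 0.5]) + rng.normal(scale=0.3, size=n)

Xs = (X_raw - X_raw.mean(axis=0)) / X_raw.std(axis=0, ddof=1)
ys = (y_raw - y_raw.mean()) / y_raw.std(ddof=1)

ols = LinearRegression().fit(Xs, ys)

# Least squares estimate from the normal equations: (X'X) beta = X'y
beta_normal_eq = np.linalg.solve(Xs.T @ Xs, Xs.T @ ys)

# R^2 computed by hand; ys is centered, so the total sum of squares is ys'ys
resid = ys - ols.predict(Xs)
r2_manual = 1.0 - (resid @ resid) / (ys @ ys)

print(np.allclose(ols.coef_, beta_normal_eq))
print(round(ols.score(Xs, ys), 4), round(r2_manual, 4))
```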

2.2 Ridge regression: parameter estimation with a fixed ridge parameter

reg02 = Ridge(alpha=0.016)

reg02.fit(X,y)

print('Ridge(alpha=0.016) score:', round(reg02.score(X, y), 4))

print('Ridge(alpha=0.016) coefficients:', reg02.coef_.round(3))


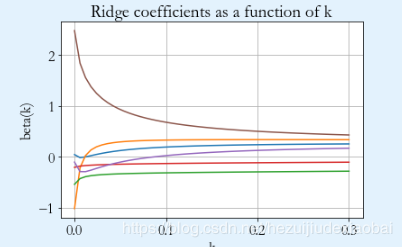
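For centered data, the ridge estimate with a fixed parameter has the closed form (X'X + alpha I)^(-1) X'y. The sketch below compares that formula with `Ridge` on synthetic, deliberately near-collinear data. The data and the names `Xs`, `ys`, `beta_closed` and `beta_sklearn` are assumptions made for illustration; alpha = 0.016 is taken from the example above.

```python
import numpy as np
from sklearn.linear_model import Ridge

# Synthetic data (hypothetical) with one nearly collinear column
rng = np.random.default_rng(1)
n, p = 16, 4
X_raw = rng.normal(size=(n, p))
X_raw[:, 3] = X_raw[:, 0] + 0.05 * rng.normal(size=n)
y_raw = X_raw @ np.array([1.0, -2.0, 0.5, 1.5]) + rng.normal(scale=0.1, size=n)

# Center and scale, as in the preprocessing step
Xs = (X_raw - X_raw.mean(axis=0)) / X_raw.std(axis=0, ddof=1)
ys = (y_raw - y_raw.mean()) / y_raw.std(ddof=1)

alpha = 0.016

# Closed-form ridge estimate: solve (X'X + alpha * I) beta = X'y
beta_closed = np.linalg.solve(Xs.T @ Xs + alpha * np.eye(p), Xs.T @ ys)

# scikit-learn's estimate for the same alpha
beta_sklearn = Ridge(alpha=alpha).fit(Xs, ys).coef_

print(np.allclose(beta_closed, beta_sklearn, atol=1e-6))
```

The agreement relies on the data being centered: `Ridge` fits an unpenalized intercept by default, which is zero here.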

Ridge regression: for a range of ridge parameter values, plot the ridge trace

alphas = np.linspace(0,0.3,51)

betas = np.zeros((51,6))

for i in range(51):

    reg03 = Ridge(alpha=alphas[i])

    reg03.fit(X,y)

    betas[i] = reg03.coef_

ax = plt.gca()

ax.plot(alphas, betas)

plt.xlabel('k')

plt.ylabel('beta(k)')

plt.title('Ridge coefficients as a function of k')

plt.grid(True)

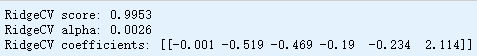
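A ridge trace shows the coefficients shrinking as the ridge parameter grows. One way to see this numerically, without a plot, is to track the Euclidean norm of the coefficient vector. The sketch below does so on synthetic near-collinear data; the data and the names `alphas_demo`, `betas_demo` and `norms` are hypothetical, and the grid starts slightly above zero to stay clear of the unpenalized case.

```python
import numpy as np
from sklearn.linear_model import Ridge

# Synthetic data (hypothetical) with one nearly collinear column
rng = np.random.default_rng(3)
n, p = 16, 4
X_raw = rng.normal(size=(n, p))
X_raw[:, 3] = X_raw[:, 0] + 0.05 * rng.normal(size=n)
y_raw = X_raw @ np.array([1.0, -2.0, 0.5, 1.5]) + rng.normal(scale=0.1, size=n)

Xs = (X_raw - X_raw.mean(axis=0)) / X_raw.std(axis=0, ddof=1)
ys = (y_raw - y_raw.mean()) / y_raw.std(ddof=1)

# One ridge fit per parameter value; each row holds one coefficient vector
alphas_demo = np.linspace(0.001, 0.3, 51)
betas_demo = np.array([Ridge(alpha=a).fit(Xs, ys).coef_ for a in alphas_demo])

# Length of the coefficient vector at each parameter value
norms = np.linalg.norm(betas_demo, axis=1)

print(norms[0], norms[-1])
```

Individual coefficients may cross zero or move non-monotonically along the trace, but the overall length of the coefficient vector does not increase with the ridge parameter.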

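2.3 Ridge regression: selecting the ridge parameter automatically by cross-validation

alphas = np.linspace(0.0001,0.1,1000)

reg04 = RidgeCV(alphas=alphas)  # default cv=None uses efficient leave-one-out cross-validation

reg04.fit(X,y)

print('RidgeCV score:', round(reg04.score(X, y), 4))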

print('RidgeCV alpha:', reg04.alpha_)

print('RidgeCV coefficients:', reg04.coef_.round(3))

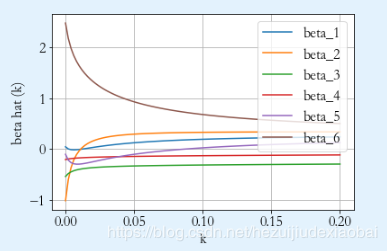
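To make the selection rule concrete, the sketch below compares `RidgeCV` with an explicit leave-one-out loop over a small grid. It is a rough illustration under several assumptions: the data are synthetic, the grid `alphas_demo` is much coarser than the one used above, and `fit_intercept=False` is set on both sides so that the comparison does not depend on how the intercept is handled. The helper `loo_mse` is hypothetical.

```python
import numpy as np
from sklearn.linear_model import Ridge, RidgeCV
from sklearn.model_selection import LeaveOneOut

# Synthetic data (hypothetical)
rng = np.random.default_rng(5)
n, p = 30, 5
Xd = rng.normal(size=(n, p))
yd = Xd @ np.array([1.5, -1.0, 0.0, 0.5, 2.0]) + rng.normal(scale=1.0, size=n)

alphas_demo = np.array([0.001, 0.01, 0.1, 1.0, 10.0])

# Default cv=None: efficient leave-one-out cross-validation
cv_model = RidgeCV(alphas=alphas_demo, fit_intercept=False).fit(Xd, yd)

def loo_mse(alpha):
    """Mean squared leave-one-out prediction error for one ridge parameter."""
    errors = []
    for train_idx, test_idx in LeaveOneOut().split(Xd):
        model = Ridge(alpha=alpha, fit_intercept=False)
        model.fit(Xd[train_idx], yd[train_idx])
        pred = model.predict(Xd[test_idx])[0]
        errors.append((yd[test_idx][0] - pred) ** 2)
    return np.mean(errors)

manual_mse = np.array([loo_mse(a) for a in alphas_demo])
best_manual = alphas_demo[np.argmin(manual_mse)]

print('RidgeCV alpha:', cv_model.alpha_, 'manual LOO alpha:', best_manual)
```

The explicit loop refits the model n times per parameter value, whereas `RidgeCV` obtains the leave-one-out errors from a single decomposition, which is why a grid of 1000 values remains cheap.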

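2.4 Enumerating ridge parameter values, computing the regression coefficients, plotting the ridge trace, and computing VIF

B = np.dot(X.T,X)  # X'X

E6 = np.eye(6)  # 6x6 identity matrix

Nk = 101

k = np.linspace(0,0.2,Nk)

beta = np.zeros((Nk,6))

for i in range(Nk):

    Binv = np.linalg.inv(B + k[i] * E6)

    beta[i] = np.asarray(np.dot(np.dot(Binv, X.T), y)).ravel()  # ridge estimate (X'X + kI)^(-1) X'y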

Plotting: run this block as a whole

for i in range(6):

    plt.plot(k, beta[:,i] ,'-', label='beta_{}'.format(i+1))

plt.legend(loc='upper right')

plt.grid(True)

plt.xlabel('k')

plt.ylabel('beta hat (k)')

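Compute VIF

VIF = np.zeros((Nk,6))

for i in range(Nk):

    Binv = np.linalg.inv(B + k[i] * E6)

    C = np.dot(np.dot(Binv, B), Binv)  # (X'X + kI)^(-1) X'X (X'X + kI)^(-1)

    VIF[i] = np.diag(C)

# Note: pandas .std() uses ddof=1, so B = X'X is (n - 1) times the correlation matrix;
# each row of VIF is therefore 1/(n - 1) times the correlation-scale VIF at ridge parameter k[i] / (n - 1).

VIF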

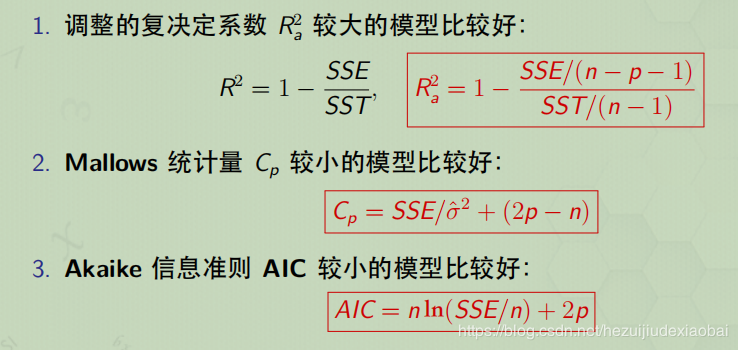
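Variance inflation factors are usually reported on the correlation scale, as the diagonal of (R + kI)^(-1) R (R + kI)^(-1), where R is the correlation matrix of the predictors. The sketch below computes them that way on synthetic data and relates the result to the unscaled X'X form. The data, the helper `ridge_vif` and the other names are hypothetical, introduced only for illustration.

```python
import numpy as np

# Synthetic predictors (hypothetical) with one nearly collinear column
rng = np.random.default_rng(4)
n, p = 16, 3
X_raw = rng.normal(size=(n, p))
X_raw[:, 2] = X_raw[:, 0] + 0.1 * rng.normal(size=n)

Xs = (X_raw - X_raw.mean(axis=0)) / X_raw.std(axis=0, ddof=1)
R = Xs.T @ Xs / (n - 1)  # sample correlation matrix of the predictors

def ridge_vif(R, k):
    """Diagonal of (R + kI)^(-1) R (R + kI)^(-1) for ridge parameter k."""
    inv = np.linalg.inv(R + k * np.eye(R.shape[0]))
    return np.diag(inv @ R @ inv)

vif_ols = ridge_vif(R, 0.0)    # at k = 0 this is diag(R^(-1)), the usual VIF
vif_ridge = ridge_vif(R, 0.1)  # every entry is smaller once k > 0

# Same quantity from the unscaled X'X: use k * (n - 1) and rescale by (n - 1)
B = Xs.T @ Xs
Binv = np.linalg.inv(B + 0.1 * (n - 1) * np.eye(p))
vif_from_B = (n - 1) * np.diag(Binv @ B @ Binv)

print(vif_ols.round(2), vif_ridge.round(2))
```

The last three lines of the computation show how the two scalings map onto each other: a ridge parameter k on the correlation scale corresponds to k(n - 1) when X'X is used directly.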

Criteria for evaluating the regression equation
