[University of Washington Machine Learning] 1. A Case Study, 1.3 Clustering: Document Retrieval

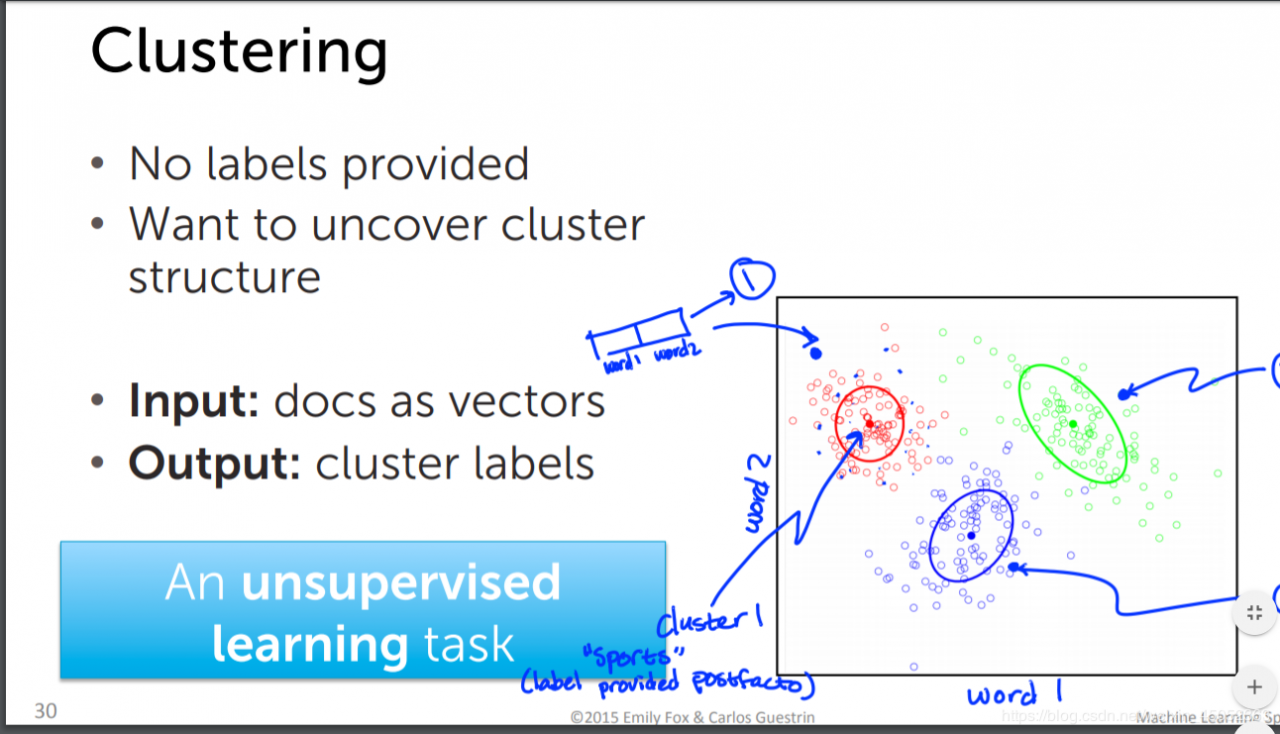

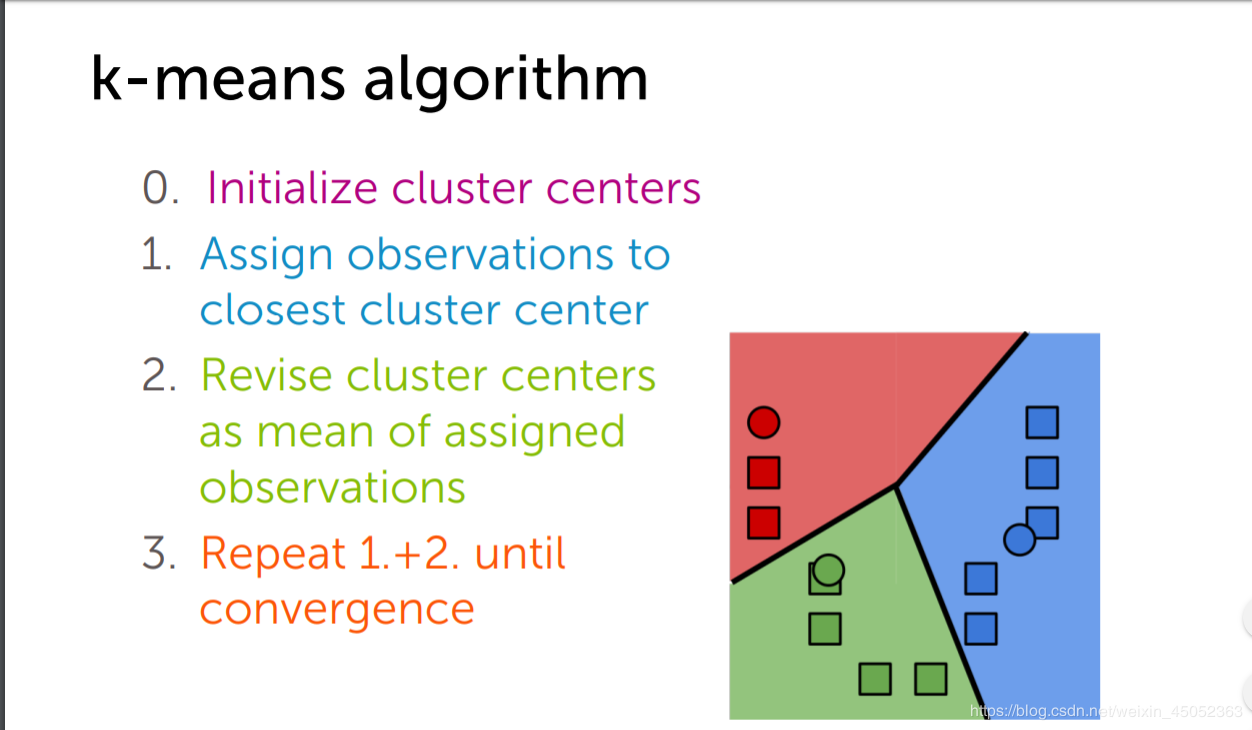

Clustering

Analyzing the documents

Implementing the clustering algorithm

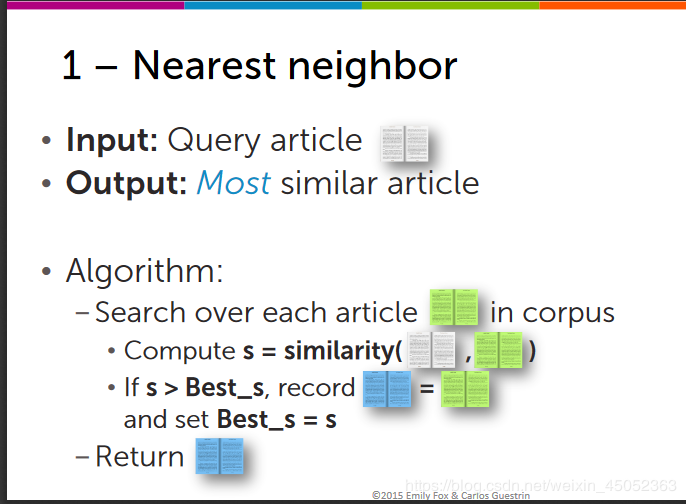

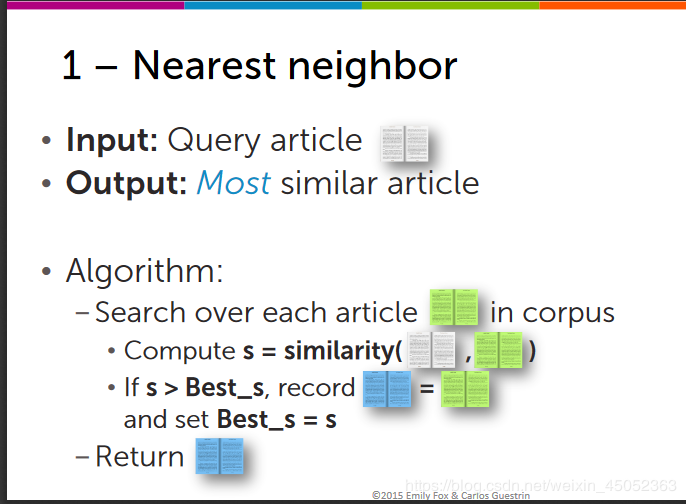

Nearest neighbor search(最近邻搜索)

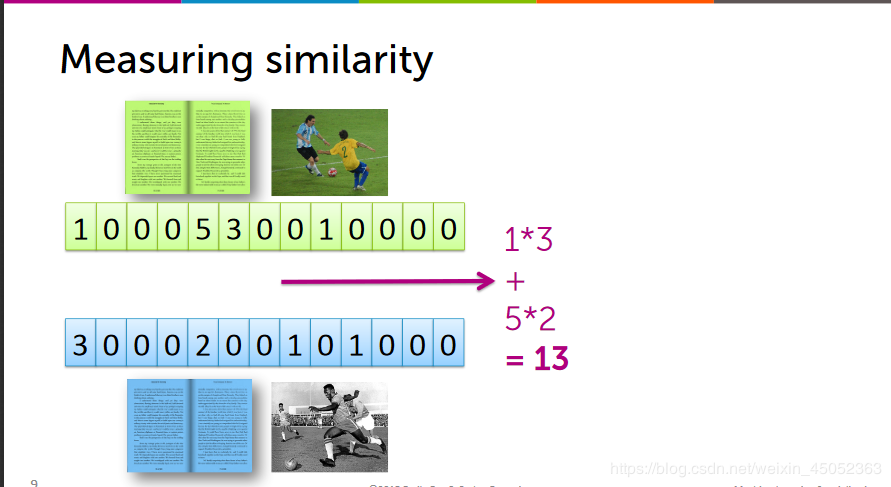

将其他的文献与目标文献进行上面的矩阵相乘,找到最近的那个
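The search described above can be sketched in plain Python. This is only an illustration, assuming each article is represented by a word-count vector and that "closest" means the largest dot product; the helper names (`word_counts`, `dot`, `nearest_neighbor`) and the toy corpus are made up for the example.

```python
from collections import Counter

def word_counts(text):
    """Bag-of-words vector stored as a word -> count mapping."""
    return Counter(text.lower().split())

def dot(a, b):
    """Dot product of two sparse count vectors."""
    return sum(count * b[word] for word, count in a.items() if word in b)

def nearest_neighbor(query, corpus):
    """Return the title of the corpus article with the highest dot product."""
    q = word_counts(query)
    return max(corpus, key=lambda title: dot(q, word_counts(corpus[title])))

# Toy corpus (hypothetical data, not from the course dataset)
corpus = {
    "soccer": "the striker scored a goal in the soccer match",
    "politics": "the senator gave a speech about the election",
    "cooking": "bake the bread and simmer the soup",
}
best = nearest_neighbor("a late goal won the soccer match", corpus)
print(best)
```

Every article in the corpus is scored against the query, so the cost grows linearly with the number of articles.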

K-nearest neighbors (KNN)

Find the k articles most similar to the query article.
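Extending the search from one result to k results only changes the selection step. The sketch below assumes the articles are already stored as sparse word-count dictionaries and uses the dot product as the similarity score; `k_nearest` and the sample vectors are hypothetical.

```python
import heapq

def dot(a, b):
    """Dot product of two sparse vectors stored as dicts."""
    return sum(value * b.get(key, 0) for key, value in a.items())

def k_nearest(query_vec, vectors, k):
    """Return up to k (score, name) pairs, highest similarity first."""
    scored = ((dot(query_vec, vec), name) for name, vec in vectors.items())
    return heapq.nlargest(k, scored)

# Made-up vectors for illustration
vectors = {
    "A": {"goal": 2, "match": 1},
    "B": {"goal": 1, "vote": 3},
    "C": {"vote": 2, "law": 1},
    "D": {"match": 3, "team": 1},
}
query = {"goal": 1, "match": 2}
top2 = k_nearest(query, vectors, k=2)
print(top2)
```

In a real retrieval setting the query article itself would usually be excluded from the candidates, since it is always its own best match.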

Author: weixin_45052363

要求如下

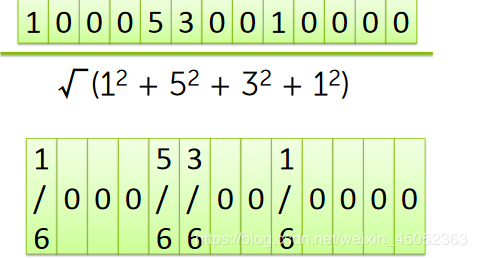

上述方法会受到倍数的影响,因此我们要将其标准化
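A small numeric check makes the scaling problem concrete. This sketch assumes normalization means dividing each vector by its Euclidean length, which is one common choice; the vectors are invented.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def normalize(v):
    """Scale a vector to unit Euclidean length (v must not be all zeros)."""
    norm = math.sqrt(dot(v, v))
    return [x / norm for x in v]

doc = [1, 0, 2, 1]
query = [1, 1, 1, 0]
doubled = [2 * x for x in doc]  # the same text pasted twice

# The raw dot product doubles when the document is doubled ...
raw_gap = dot(query, doubled) / dot(query, doc)

# ... while the normalized similarity stays the same.
norm_sim = dot(normalize(query), normalize(doc))
norm_sim_doubled = dot(normalize(query), normalize(doubled))
print(raw_gap, norm_sim, norm_sim_doubled)
```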

Clustering is a form of unsupervised learning: the input data carries no labels.
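To show what grouping without labels looks like, here is a minimal k-means sketch. The notes do not say which clustering algorithm the course uses, so k-means is an assumption picked for illustration, and the initialization (the first k points) is deliberately naive.

```python
def sq_dist(p, q):
    """Squared Euclidean distance between two points."""
    return sum((a - b) ** 2 for a, b in zip(p, q))

def mean(points):
    """Coordinate-wise mean of a non-empty list of points."""
    n = len(points)
    return tuple(sum(coords) / n for coords in zip(*points))

def assign(points, centers):
    """Index of the nearest center for every point."""
    return [min(range(len(centers)), key=lambda i: sq_dist(p, centers[i]))
            for p in points]

def kmeans(points, k, iterations=10):
    centers = list(points[:k])  # naive initialization: first k points
    for _ in range(iterations):
        labels = assign(points, centers)
        for i in range(k):
            members = [p for p, lab in zip(points, labels) if lab == i]
            if members:  # keep the old center if a cluster is empty
                centers[i] = mean(members)
    return centers, assign(points, centers)

# Unlabeled toy data: two visually separate groups
points = [(0.0, 0.0), (10.0, 10.0), (0.0, 1.0),
          (1.0, 0.0), (10.0, 11.0), (11.0, 10.0)]
centers, labels = kmeans(points, k=2)
print(labels)
```

The cluster indices are discovered from the geometry of the data alone; nothing in the input says which group a point belongs to.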

import graphlab

people = graphlab.SFrame('people_wiki.sframe')

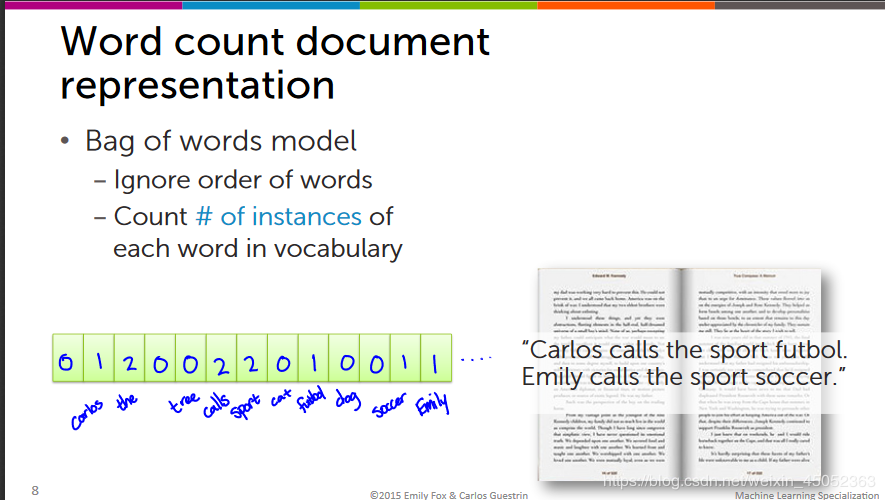

Get the word counts for the Obama article

obama = people[people['name'] == 'Barack Obama']  # select Obama's row first

obama['word_count'] = graphlab.text_analytics.count_words(obama['text'])

Sort the word counts for the Obama article

obama_word_count_table = obama[['word_count']].stack('word_count', new_column_name=['word','count'])

obama_word_count_table.sort('count', ascending=False)

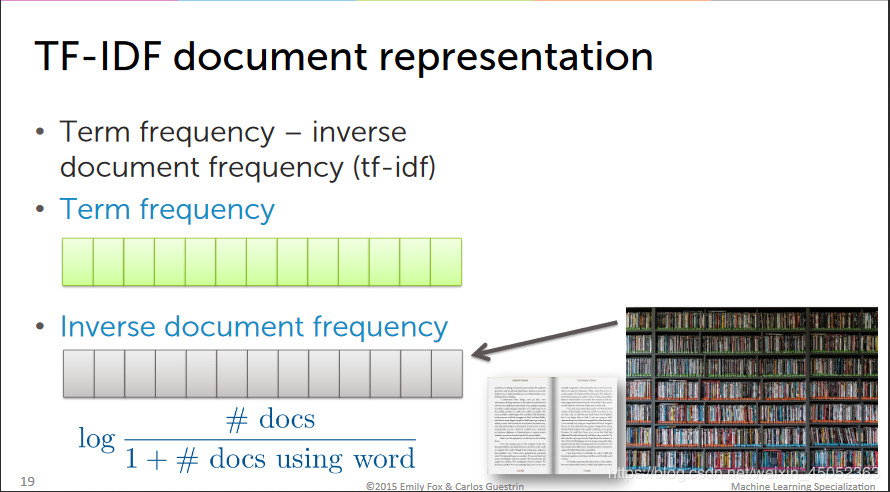

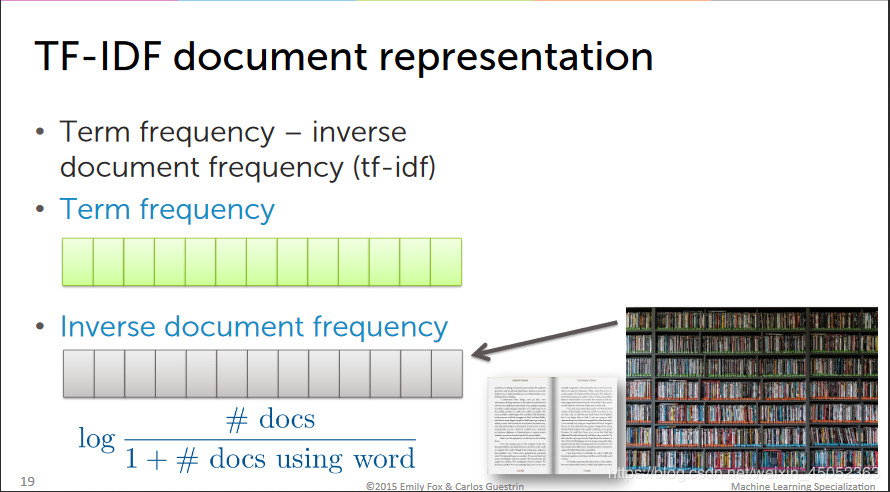

Compute TF-IDF for the corpus

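people['word_count'] = graphlab.text_analytics.count_words(people['text'])  # count the words in every article first

tfidf = graphlab.text_analytics.tf_idf(people['word_count'])  # tf_idf computes the weights directly

people['tfidf'] = tfidf  # store the result as a column; some older GraphLab Create versions may need tfidf['docs'] here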
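To see what the TF-IDF step computes, here is a dependency-free sketch. It assumes the common definition tf × log(N / df), where tf is the word's count in the article, N the number of articles, and df the number of articles containing the word; GraphLab Create's exact formula may differ in details, and the function name and sample sentences are invented.

```python
import math
from collections import Counter

def tf_idf(docs):
    """docs: list of token lists. Returns one {word: weight} dict per document."""
    n = len(docs)
    # Document frequency: in how many documents does each word appear?
    doc_freq = Counter(word for doc in docs for word in set(doc))
    return [
        {w: tf * math.log(n / doc_freq[w]) for w, tf in Counter(doc).items()}
        for doc in docs
    ]

docs = [
    "the president signed the law".split(),
    "the striker scored the goal".split(),
    "the president met the striker".split(),
]
weights = tf_idf(docs)
print(sorted(weights[0].items(), key=lambda kv: -kv[1]))
```

A word such as "the" that appears in every article gets weight 0, while words unique to one article get the largest weights, which is why TF-IDF surfaces the distinctive vocabulary of an article.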

Examine the TF-IDF for the Obama article

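obama = people[people['name'] == 'Barack Obama']  # select Obama's row again, now that the tfidf column exists

obama[['tfidf']].stack('tfidf', new_column_name=['word','tfidf']).sort('tfidf', ascending=False)  # expand the TF-IDF dictionary and sort by weight, highest first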

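Is Obama closer to Clinton or Beckham?

clinton = people[people['name'] == 'Bill Clinton']

beckham = people[people['name'] == 'David Beckham']

graphlab.distances.cosine(obama['tfidf'][0], clinton['tfidf'][0])  # cosine distance; smaller means closer

graphlab.distances.cosine(obama['tfidf'][0], beckham['tfidf'][0])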
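The comparison above can be reproduced without GraphLab. The sketch assumes cosine distance is defined as 1 minus cosine similarity and that each TF-IDF vector is a sparse dictionary, as in the `tfidf` column; the weights below are made up and are not the real values for these articles.

```python
import math

def cosine_distance(a, b):
    """1 - cosine similarity for non-zero sparse vectors stored as dicts."""
    dot = sum(value * b.get(key, 0.0) for key, value in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return 1.0 - dot / (norm_a * norm_b)

# Hypothetical TF-IDF weights for illustration only
obama_vec = {"president": 3.0, "senate": 2.0, "law": 1.0}
clinton_vec = {"president": 2.0, "governor": 2.0, "law": 1.0}
beckham_vec = {"football": 4.0, "england": 2.0, "club": 1.0}

d_clinton = cosine_distance(obama_vec, clinton_vec)
d_beckham = cosine_distance(obama_vec, beckham_vec)
print(d_clinton, d_beckham)
```

Vectors that share no words have distance 1, and the distance shrinks toward 0 as the weighted vocabulary overlaps more.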

Build a nearest neighbor model for document retrieval

knn_model = graphlab.nearest_neighbors.create(people, features=['tfidf'], label='name')  # create the nearest neighbor model

Call the model's query method directly to retrieve the nearest articles

knn_model.query(beckham)
