scrapy创建以及启动项目步骤!

1,创建项目:scrapy startproject biqukanproject

D:\pythonscrapy>scrapy startproject biqukanproject

New Scrapy project 'biqukanproject', using template directory 'd:\python_install\lib\site-packages\scrapy\templates\project', created in:

D:\pythonscrapy\biqukanproject

You can start your first spider with:

cd biqukanproject

scrapy genspider example example.com

2,进入被创建项目,再创建爬虫!

D:\pythonscrapy>cd biqukanproject

D:\pythonscrapy\biqukanproject>

3,创建爬虫:scrapy genspider biqukanspider biqukan.com

D:\pythonscrapy\biqukanproject>scrapy genspider biqukanspider biqukan.com

Created spider 'biqukanspider' using template 'basic' in module:

biqukanproject.spiders.biqukanspider

4,启动:

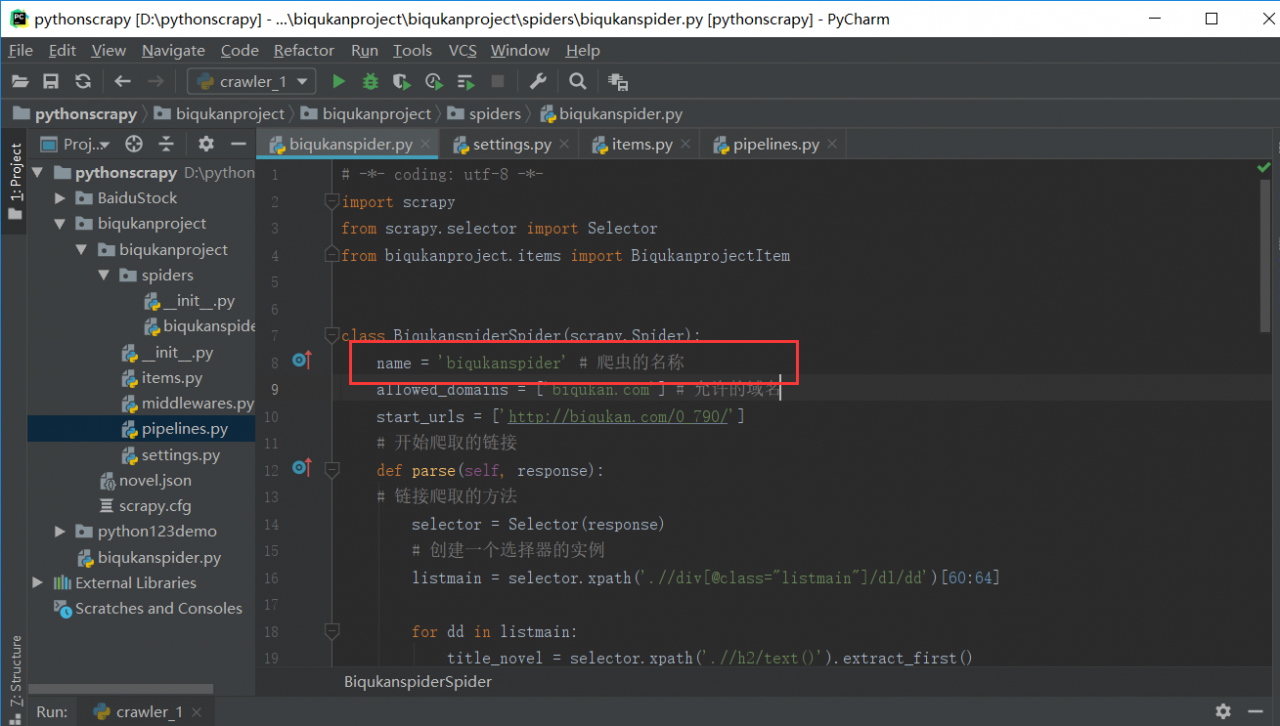

启动名字是上面图里面圈起来的!

命令: scrapy crawl biqukanspider

结果:

D:\pythonscrapy\biqukanproject>scrapy crawl biqukanspider

2020-02-28 14:36:39 [scrapy.utils.log] INFO: Scrapy 1.8.0 started (bot: biqukanproject)

2020-02-28 14:36:39 [scrapy.utils.log] INFO: Versions: lxml 4.5.0.0, libxml2 2.9.5, cssselect 1.1.0, parsel 1.5.2, w3lib 1.21.0, Twisted 19.10.0, Python 3.8.1 (tags/v3.8.1:1b293b6, Dec 18 2019, 22:39:24) [MSC v.1916 32 bit (Intel)], pyOpenSSL 19.1.0 (OpenSSL 1.1.1d 10 Sep 2019), cryptography 2.8, Platform Windows-10-10.0.17134-SP0

2020-02-28 14:36:39 [scrapy.crawler] INFO: Overridden settings: {'BOT_NAME': 'biqukanproject', 'NEWSPIDER_MODULE': 'biqukanproject.spiders', 'ROBOTSTXT_OBEY': True, 'SPIDER_MODULES': ['biqukanproject.spiders'], 'USER_AGENT': 'Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/65.0.3325.162 Safari/537.36'}

2020-02-28 14:36:39 [scrapy.extensions.telnet] INFO: Telnet Password: 1de4a9895047eee8

2020-02-28 14:36:40 [scrapy.middleware] INFO: Enabled extensions:

['scrapy.extensions.corestats.CoreStats',

'scrapy.extensions.telnet.TelnetConsole',

'scrapy.extensions.logstats.LogStats']

2020-02-28 14:36:41 [scrapy.middleware] INFO: Enabled downloader middlewares:

['scrapy.downloadermiddlewares.robotstxt.RobotsTxtMiddleware',

'scrapy.downloadermiddlewares.httpauth.HttpAuthMiddleware',

'scrapy.downloadermiddlewares.downloadtimeout.DownloadTimeoutMiddleware',

'scrapy.downloadermiddlewares.defaultheaders.DefaultHeadersMiddleware',

'scrapy.downloadermiddlewares.useragent.UserAgentMiddleware',

'scrapy.downloadermiddlewares.retry.RetryMiddleware',

'scrapy.downloadermiddlewares.redirect.MetaRefreshMiddleware',

'scrapy.downloadermiddlewares.httpcompression.HttpCompressionMiddleware',

'scrapy.downloadermiddlewares.redirect.RedirectMiddleware',

'scrapy.downloadermiddlewares.cookies.CookiesMiddleware',

'scrapy.downloadermiddlewares.httpproxy.HttpProxyMiddleware',

'scrapy.downloadermiddlewares.stats.DownloaderStats']

2020-02-28 14:36:41 [scrapy.middleware] INFO: Enabled spider middlewares:

['scrapy.spidermiddlewares.httperror.HttpErrorMiddleware',

'scrapy.spidermiddlewares.offsite.OffsiteMiddleware',

'scrapy.spidermiddlewares.referer.RefererMiddleware',

'scrapy.spidermiddlewares.urllength.UrlLengthMiddleware',

'scrapy.spidermiddlewares.depth.DepthMiddleware']

2020-02-28 14:36:41 [scrapy.middleware] INFO: Enabled item pipelines:

['biqukanproject.pipelines.BiqukanprojectPipeline']

2020-02-28 14:36:41 [scrapy.core.engine] INFO: Spider opened

2020-02-28 14:36:41 [scrapy.extensions.logstats] INFO: Crawled 0 pages (at 0 pages/min), scraped 0 items (at 0 items/min)

2020-02-28 14:36:41 [scrapy.extensions.telnet] INFO: Telnet console listening on 127.0.0.1:6023

2020-02-28 14:36:42 [scrapy.downloadermiddlewares.retry] DEBUG: Retrying (failed 1 times): []

2020-02-28 14:36:42 [scrapy.downloadermiddlewares.retry] DEBUG: Retrying (failed 2 times): []

2020-02-28 14:36:42 [scrapy.downloadermiddlewares.retry] DEBUG: Gave up retrying (failed 3 times): []

2020-02-28 14:36:42 [scrapy.downloadermiddlewares.robotstxt] ERROR: Error downloading : []

Traceback (most recent call last):

File "d:\python_install\lib\site-packages\scrapy\core\downloader\middleware.py", line 44, in process_request

defer.returnValue((yield download_func(request=request, spider=spider)))

twisted.web._newclient.ResponseNeverReceived: []

2020-02-28 14:36:43 [scrapy.downloadermiddlewares.retry] DEBUG: Retrying (failed 1 times): []

2020-02-28 14:36:46 [scrapy.downloadermiddlewares.retry] DEBUG: Retrying (failed 2 times): []

2020-02-28 14:36:52 [scrapy.downloadermiddlewares.retry] DEBUG: Gave up retrying (failed 3 times): []

2020-02-28 14:36:52 [scrapy.core.scraper] ERROR: Error downloading

Traceback (most recent call last):

File "d:\python_install\lib\site-packages\scrapy\core\downloader\middleware.py", line 44, in process_request

defer.returnValue((yield download_func(request=request, spider=spider)))

twisted.web._newclient.ResponseNeverReceived: []

2020-02-28 14:36:52 [scrapy.core.engine] INFO: Closing spider (finished)

2020-02-28 14:36:52 [scrapy.statscollectors] INFO: Dumping Scrapy stats:

{'downloader/exception_count': 6,

'downloader/exception_type_count/twisted.web._newclient.ResponseNeverReceived': 6,

'downloader/request_bytes': 1758,

'downloader/request_count': 6,

'downloader/request_method_count/GET': 6,

'elapsed_time_seconds': 10.784783,

'finish_reason': 'finished',

'finish_time': datetime.datetime(2020, 2, 28, 6, 36, 52, 625867),

'log_count/DEBUG': 6,

'log_count/ERROR': 2,

'log_count/INFO': 10,

'retry/count': 4,

'retry/max_reached': 2,

'retry/reason_count/twisted.web._newclient.ResponseNeverReceived': 4,

"robotstxt/exception_count/": 1,

'robotstxt/request_count': 1,

'scheduler/dequeued': 3,

'scheduler/dequeued/memory': 3,

'scheduler/enqueued': 3,

'scheduler/enqueued/memory': 3,

'start_time': datetime.datetime(2020, 2, 28, 6, 36, 41, 841084)}

2020-02-28 14:36:52 [scrapy.core.engine] INFO: Spider closed (finished)

D:\pythonscrapy\biqukanproject>

作者:dream_uping