PyTorch: Solving the XOR Problem

Task: design a feedforward neural network that solves the XOR problem.
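XOR outputs 1 exactly when the two inputs differ. A single linear unit that predicts 1 when $w_1 x_1 + w_2 x_2 + b > 0$ cannot represent it, which is why the network needs a hidden layer. The short argument below assumes such a unit and derives a contradiction from the four rows of the truth table:

$$
\begin{aligned}
(0,0) \mapsto 0 &: \quad b \le 0 \\
(1,0) \mapsto 1 &: \quad w_1 + b > 0 \\
(0,1) \mapsto 1 &: \quad w_2 + b > 0 \\
(1,1) \mapsto 0 &: \quad w_1 + w_2 + b \le 0 \\[4pt]
(w_1 + b) + (w_2 + b) > 0 &\;\Rightarrow\; w_1 + w_2 + b > -b \ge 0
\end{aligned}
$$

The last line contradicts the condition for $(1,1)$, so no single line separates the two classes.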

Author: 我是大黄同学呀

The network has a single hidden layer with two neurons, followed by one output neuron.
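Why two hidden neurons are enough: XOR can be written as AND(OR(x1, x2), NAND(x1, x2)), so one hidden unit can act as OR and the other as NAND, with the output unit computing AND. The NumPy sketch below checks this with hand-picked weights. These weights are illustrative assumptions, not the values the training run finds, and they are stored in the same (out_features, in_features) layout that `nn.Linear` uses.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


# Hypothetical hand-picked weights in nn.Linear's (out_features, in_features) layout
W1 = np.array([[20.0, 20.0],     # hidden unit 1 ~ OR(x1, x2)
               [-20.0, -20.0]])  # hidden unit 2 ~ NAND(x1, x2)
b1 = np.array([-10.0, 30.0])
W2 = np.array([[20.0, 20.0]])    # output unit ~ AND(h1, h2)
b2 = np.array([-30.0])


def forward(x):
    h = sigmoid(x @ W1.T + b1)     # hidden layer, shape (N, 2)
    return sigmoid(h @ W2.T + b2)  # output layer, shape (N, 1)


# Inputs in the same order as the author's data array
x = np.array([[1, 0], [0, 1], [1, 1], [0, 0]], dtype=np.float32)
print(np.round(forward(x)).ravel())  # -> [1. 1. 0. 0.]
```

Large weights push each sigmoid close to 0 or 1, so every unit behaves almost like a logic gate; training only has to discover some weights with the same effect.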

GitHub: https://github.com/Classmate-Huang/nnFramework/blob/master/EasyPytorch/XOR.py

Code:

```python
# Solving XOR with PyTorch
import numpy as np
import torch
import torch.nn as nn
import torch.optim as optim

# XOR truth table: the first two columns are the inputs, the last column is the label
data = np.array([[1, 0, 1], [0, 1, 1],
                 [1, 1, 0], [0, 0, 0]], dtype='float32')
x = data[:, :2]
y = data[:, 2]


# Initialize weights: N(0, 1) for the weights of every Linear layer, zeros for the biases
def weight_init_normal(m):
    if isinstance(m, nn.Linear):
        nn.init.normal_(m.weight, mean=0.0, std=1.0)
        nn.init.zeros_(m.bias)


class XOR(nn.Module):
    def __init__(self):
        super().__init__()
        self.fc1 = nn.Linear(2, 2)  # one hidden layer with 2 neurons
        self.fc2 = nn.Linear(2, 1)  # output layer with 1 neuron

    def forward(self, x):
        # I also tried ReLU as the activation function earlier,
        # but it ran into dying ReLUs far too easily.
        h1 = torch.sigmoid(self.fc1(x))
        h2 = torch.sigmoid(self.fc2(h1))  # output in (0, 1), as nn.BCELoss expects
        return h2


net = XOR()
net.apply(weight_init_normal)

x = torch.tensor(x)                 # shape (4, 2)
y = torch.tensor(y).reshape(-1, 1)  # shape (4, 1), to match the network output

# Loss function: binary cross-entropy (nn.MSELoss() is a possible alternative)
criterion = nn.BCELoss()

# Optimizer: SGD with momentum
optimizer = optim.SGD(net.parameters(), lr=0.1, momentum=0.9)

# Training
for epoch in range(1000):
    optimizer.zero_grad()  # clear the gradient buffers
    out = net(x)
    loss = criterion(out, y)
    if epoch % 100 == 0:
        print(f"epoch {epoch}: loss = {loss.item():.4f}")
    loss.backward()
    optimizer.step()  # update the parameters
```

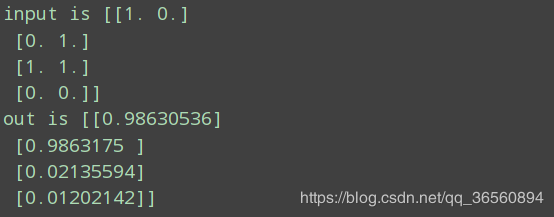
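The training code uses `nn.BCELoss`, which for a predicted probability p and label y averages -[y log p + (1 - y) log(1 - p)] over the batch by default (`reduction='mean'`). The NumPy sketch below writes that formula out and compares it with the mean squared error, the alternative loss, on a set of hypothetical network outputs; the helper names `bce` and `mse` are illustrative only.

```python
import numpy as np


def bce(p, y):
    # Binary cross-entropy averaged over the samples (same formula as nn.BCELoss, reduction='mean')
    return -np.mean(y * np.log(p) + (1 - y) * np.log(1 - p))


def mse(p, y):
    # Mean squared error between probabilities and labels
    return np.mean((p - y) ** 2)


y = np.array([1.0, 1.0, 0.0, 0.0])  # XOR labels in the order of the data array
p = np.array([0.9, 0.8, 0.2, 0.1])  # hypothetical network outputs
print(f"BCE = {bce(p, y):.4f}, MSE = {mse(p, y):.4f}")  # BCE = 0.1643, MSE = 0.0250
```

BCE grows without bound as a confident prediction approaches the wrong label, while each MSE term is capped at 1, which is one reason BCE pairs well with a sigmoid output. If the final sigmoid is removed from `forward` and the raw outputs are passed to `nn.BCEWithLogitsLoss` instead, PyTorch combines the sigmoid and the loss in a more numerically stable way.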

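```python
# Test: run the trained network on the four XOR inputs
with torch.no_grad():  # gradients are not needed for inference
    test = net(x)
print("input is {}".format(x.numpy()))
print("out is {}".format(test.numpy()))
```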
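The printed outputs are probabilities in (0, 1); to read them as XOR answers, threshold them at 0.5. With only two hidden units and random N(0, 1) initialization, some runs can also stall in a poor local minimum, so results may differ from run to run (calling `torch.manual_seed` before building the network makes a run reproducible). The NumPy sketch below is an independent illustration, not the author's code: it spells out the forward pass, the backward pass and the parameter update that `loss.backward()` and `optimizer.step()` perform, using plain gradient descent instead of SGD with momentum, and then thresholds the outputs. The function name `train_xor`, the learning rate 0.5 and the 10,000 epochs are assumptions chosen for this sketch.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def train_xor(seed, epochs=10000, lr=0.5):
    """Full-batch gradient descent on a 2-2-1 sigmoid network with BCE loss (hypothetical helper)."""
    rng = np.random.default_rng(seed)
    x = np.array([[1, 0], [0, 1], [1, 1], [0, 0]], dtype=float)
    y = np.array([[1], [1], [0], [0]], dtype=float)
    # Same scheme as weight_init_normal: N(0, 1) weights, zero biases
    W1, b1 = rng.normal(0.0, 1.0, (2, 2)), np.zeros(2)
    W2, b2 = rng.normal(0.0, 1.0, (1, 2)), np.zeros(1)
    n = len(x)
    for _ in range(epochs):
        # Forward pass
        h = sigmoid(x @ W1.T + b1)        # (4, 2)
        p = sigmoid(h @ W2.T + b2)        # (4, 1)
        # Backward pass: for a sigmoid output with BCE, dLoss/dz2 simplifies to (p - y) / n
        dz2 = (p - y) / n                 # (4, 1)
        dW2, db2 = dz2.T @ h, dz2.sum(axis=0)
        dz1 = (dz2 @ W2) * h * (1 - h)    # (4, 2), chain rule through the hidden sigmoid
        dW1, db1 = dz1.T @ x, dz1.sum(axis=0)
        # Plain gradient-descent update (no momentum)
        W1 -= lr * dW1
        b1 -= lr * db1
        W2 -= lr * dW2
        b2 -= lr * db2
    p = sigmoid(sigmoid(x @ W1.T + b1) @ W2.T + b2)
    pred = (p >= 0.5).astype(float)       # threshold the probabilities at 0.5
    return pred, float((pred == y).mean())


for seed in range(5):
    pred, acc = train_xor(seed)
    print(f"seed {seed}: predictions {pred.ravel()}, accuracy {acc:.2f}")
```

Running several seeds makes the sensitivity to initialization visible; a seed whose accuracy stays below 1.00 is one that got stuck.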

Results
