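Example code for multithreaded scraping of the Xici proxy list (西刺代理) in Python

Xici Proxy (西刺代理) was an IP proxy listing site in China. Since the service has shut down, I am releasing my original code here for everyone to study.

Mirror: https://www.blib.cn/url/xcdl.html

First, find all of the tr tags, including the ones with class="odd", and extract them.

Next, go through each tr tag and find all of the td tags inside it, then extract only the cells at positions [1,2,5,9] and skip the rest.

Finally, we can write the code that extracts a single page and saves the result to a file.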

import sys,re,threading

import requests,lxml

from queue import Queue

import argparse

from bs4 import BeautifulSoup

head = {"User-Agent": "Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/80.0.3987.100 Safari/537.36"}

if __name__ == "__main__":

ip_list=[]

fp = open("SpiderAddr.json","a+",encoding="utf-8")

url = "https://www.blib.cn/url/xcdl.html"

request = requests.get(url=url,headers=head)

soup = BeautifulSoup(request.content,"lxml")

data = soup.find_all(name="tr",attrs={"class": re.compile("|[^odd]")})

for item in data:

soup_proxy = BeautifulSoup(str(item),"lxml")

proxy_list = soup_proxy.find_all(name="td")

for i in [1,2,5,9]:

ip_list.append(proxy_list[i].string)

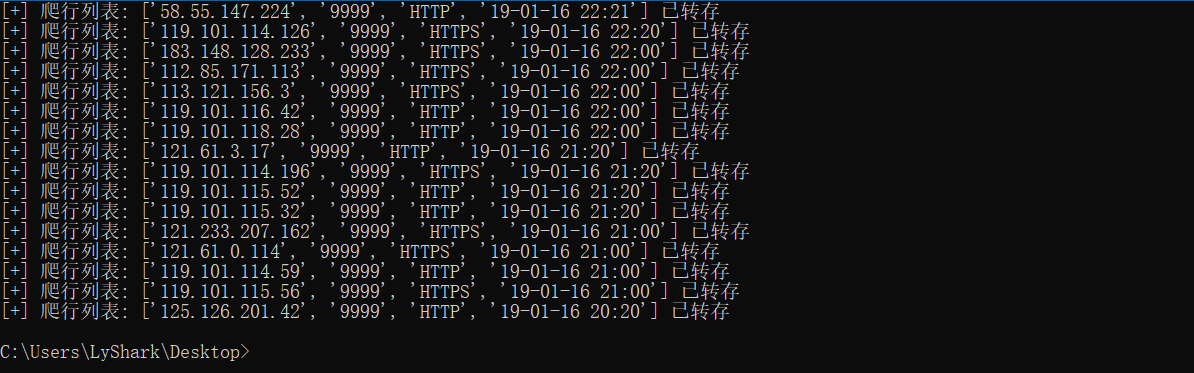

print("[+] 爬行列表: {} 已转存".format(ip_list))

fp.write(str(ip_list) + '\n')

ip_list.clear()
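To see what the index list [1,2,5,9] selects without touching the network, the cell-picking step can be tried on a single row. This is only a sketch: it swaps BeautifulSoup for the standard library's `html.parser` so it runs with no extra packages, and the sample row, the `CellCollector` class, and the `pick_columns` helper are all made up for illustration. The column meanings in the sample row are assumptions, not taken from the real site.

```python
from html.parser import HTMLParser

# Made-up sample row with ten cells; the contents are illustrative assumptions.
SAMPLE_ROW = (
    '<tr class="odd">'
    "<td>CN</td><td>119.101.112.31</td><td>9999</td><td>Hubei</td>"
    "<td>anonymous</td><td>HTTP</td><td>0.3s</td><td>0.1s</td>"
    "<td>1 day</td><td>20-01-01 12:00</td>"
    "</tr>"
)


class CellCollector(HTMLParser):
    """Hypothetical helper: collect the text of every <td>, in document order."""

    def __init__(self):
        super().__init__()
        self.cells = []
        self._in_td = False

    def handle_starttag(self, tag, attrs):
        if tag == "td":
            self._in_td = True
            self.cells.append("")

    def handle_endtag(self, tag):
        if tag == "td":
            self._in_td = False

    def handle_data(self, data):
        # Text may arrive in several chunks, so append to the current cell.
        if self._in_td:
            self.cells[-1] += data


def pick_columns(row_html, wanted=(1, 2, 5, 9)):
    """Return the text of the cells at the wanted positions."""
    collector = CellCollector()
    collector.feed(row_html)
    collector.close()
    return [collector.cells[i].strip() for i in wanted]


selected = pick_columns(SAMPLE_ROW)
print(selected)
```

For this sample row the selected cells are the address, the port, the protocol, and the last cell of the row.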

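After crawling, the results are saved to the file SpiderAddr.json. Despite the .json extension, each line of the file is the str() of a Python list rather than JSON.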

Finally, a second piece of code converts the saved lines into a format that an SSR proxy tool can recognize directly: {'http': 'http://119.101.112.31:9999'}

    import ast

    if __name__ == "__main__":
        result = []
        with open("SpiderAddr.json", "r", encoding="utf-8") as fp:
            for item in fp:
                item = item.strip()
                if not item:
                    continue  # ignore blank lines
                dic = {}
                # literal_eval parses the saved list without executing code, unlike eval.
                read_line = ast.literal_eval(item)
                Protocol = read_line[2].lower()
                if Protocol == "http":
                    dic[Protocol] = "http://" + read_line[0] + ":" + read_line[1]
                else:
                    dic[Protocol] = "https://" + read_line[0] + ":" + read_line[1]
                result.append(dic)
        print(result)
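The conversion can be checked on a single line without reading the file. The following is a minimal sketch, assuming a line shaped like the ones the crawler writes; `to_proxy_dict` and the sample lines are hypothetical names and data introduced only for this example.

```python
import ast


def to_proxy_dict(line):
    """Hypothetical helper: turn one saved line into a one-entry proxy dict."""
    # literal_eval only accepts Python literals, so a malformed line raises
    # an error instead of running code.
    fields = ast.literal_eval(line.strip())
    ip, port, protocol = fields[0], fields[1], fields[2].lower()
    # Same rule as the script: "http" keeps http://, anything else gets https://.
    scheme = "http" if protocol == "http" else "https"
    return {protocol: "{}://{}:{}".format(scheme, ip, port)}


# Made-up sample lines in the format the crawler saves.
http_line = "['119.101.112.31', '9999', 'HTTP', '20-01-01 12:00']\n"
https_line = "['10.0.0.1', '8080', 'HTTPS', '20-01-01 12:00']\n"

http_proxy = to_proxy_dict(http_line)
https_proxy = to_proxy_dict(https_line)
print(http_proxy)
print(https_proxy)
```

A dict of this shape is also what `requests` accepts through its `proxies` argument, for example `requests.get(url, proxies=http_proxy)`.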

The complete multithreaded version of the code is shown below.

    import argparse
    import ast
    import queue
    import re
    import threading

    import requests
    from bs4 import BeautifulSoup  # the "lxml" parser used below requires the lxml package

    head = {"User-Agent": "Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/80.0.3987.100 Safari/537.36"}

    # Serializes file writes so lines from different threads do not interleave.
    write_lock = threading.Lock()

    class AgentSpider(threading.Thread):
        def __init__(self, task_queue):
            threading.Thread.__init__(self)
            self._queue = task_queue

        def run(self):
            with open("SpiderAddr.json", "a+", encoding="utf-8") as fp:
                while True:
                    # get_nowait() avoids blocking forever when another thread
                    # takes the last URL between an empty() check and get().
                    try:
                        url = self._queue.get_nowait()
                    except queue.Empty:
                        break
                    try:
                        # The timeout keeps a stalled connection from hanging the thread.
                        response = requests.get(url=url, headers=head, timeout=10)
                        soup = BeautifulSoup(response.content, "lxml")
                        data = soup.find_all(name="tr", attrs={"class": re.compile("|[^odd]")})
                        for item in data:
                            proxy_list = item.find_all(name="td")
                            if len(proxy_list) < 10:
                                continue  # skip rows that do not have all ten cells
                            ip_list = [proxy_list[i].string for i in [1, 2, 5, 9]]
                            print("[+] Crawled list: {} saved".format(ip_list))
                            with write_lock:
                                fp.write(str(ip_list) + "\n")
                                fp.flush()
                    except Exception as exc:
                        # Report the failure instead of silently discarding it.
                        print("[-] Failed to crawl {}: {}".format(url, exc))

    def StartThread(count):
        task_queue = queue.Queue()
        threads = []
        for item in range(1, int(count) + 1):
            # Original site URL, kept as written; the service has since shut down,
            # so these requests are expected to fail.
            url = "https://www.xicidaili.com/nn/{}".format(item)
            task_queue.put(url)
            print("[+] Generated crawl link {}".format(url))
        # One worker thread per page.
        for item in range(int(count)):
            threads.append(AgentSpider(task_queue))
        for t in threads:
            t.start()
        for t in threads:
            t.join()

    # Conversion function
    def ConversionAgentIP(FileName):
        result = []
        with open(FileName, "r", encoding="utf-8") as fp:
            for item in fp:
                item = item.strip()
                if not item:
                    continue  # ignore blank lines
                dic = {}
                # literal_eval parses the saved list without executing code, unlike eval.
                read_line = ast.literal_eval(item)
                Protocol = read_line[2].lower()
                if Protocol == "http":
                    dic[Protocol] = "http://" + read_line[0] + ":" + read_line[1]
                else:
                    dic[Protocol] = "https://" + read_line[0] + ":" + read_line[1]
                result.append(dic)
        return result

    if __name__ == "__main__":
        parser = argparse.ArgumentParser()
        parser.add_argument("-p", "--page", dest="page", type=int, help="number of pages to crawl")
        parser.add_argument("-f", "--file", dest="file", help="convert crawled results (SpiderAddr.json) into proxy format")
        args = parser.parse_args()
        if args.page:
            StartThread(args.page)
        elif args.file:
            dic = ConversionAgentIP(args.file)
            for item in dic:
                print(item)
        else:
            parser.print_help()
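The queue-and-worker pattern behind the script can be exercised without any network access. The sketch below is not the article's crawler: `fake_fetch`, `worker`, and `crawl` are hypothetical names, and `fake_fetch` stands in for the download-and-parse step. It shows two choices that matter when several threads share one queue: each worker pulls URLs with `get_nowait()` and exits on `queue.Empty` rather than blocking, and a lock guards the shared output.

```python
import queue
import threading


def fake_fetch(url):
    # Hypothetical stand-in for requests.get plus parsing; no network access.
    return ["row from " + url]


def worker(tasks, results, lock):
    """Pull URLs until the queue is drained, then return."""
    while True:
        try:
            url = tasks.get_nowait()
        except queue.Empty:
            return  # nothing left; do not block waiting for more work
        rows = fake_fetch(url)
        with lock:
            # Only one thread at a time appends to the shared list.
            results.extend(rows)


def crawl(urls, thread_count=4):
    tasks = queue.Queue()
    for url in urls:
        tasks.put(url)
    results = []
    lock = threading.Lock()
    threads = [
        threading.Thread(target=worker, args=(tasks, results, lock))
        for _ in range(thread_count)
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results


urls = ["https://www.xicidaili.com/nn/{}".format(n) for n in range(1, 6)]
rows = crawl(urls)
print(sorted(rows))
```

The order in which rows arrive depends on thread scheduling, which is why the example sorts them before printing. With the script above, `-p 5` would crawl five pages and `-f SpiderAddr.json` would print the converted proxy entries.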
