大数据技术之Flume

大数据技术之Flume

第 1 章 Flume 概述

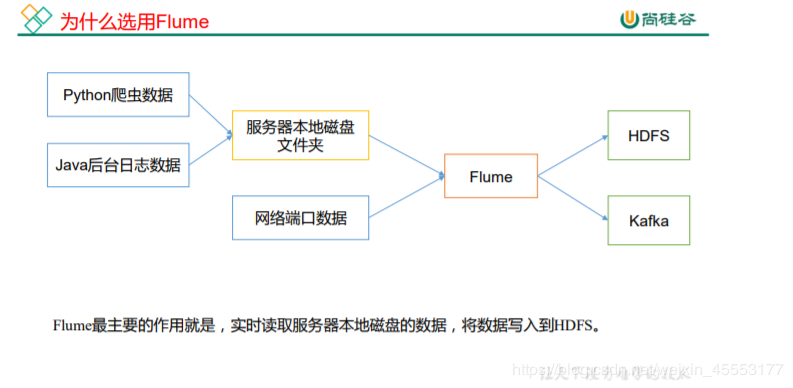

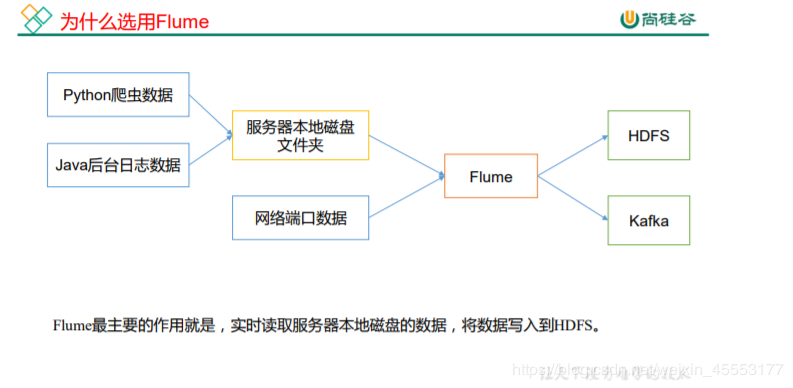

1.1 Flume 定义

Flume 是 Cloudera 提供的一个高可用的,高可靠的,分布式的海量日志采集、聚合和传

输的系统。Flume 基于流式架构,灵活简单。

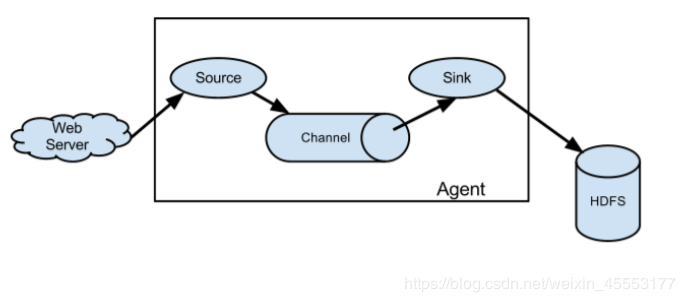

1.2 Flume 基础架构

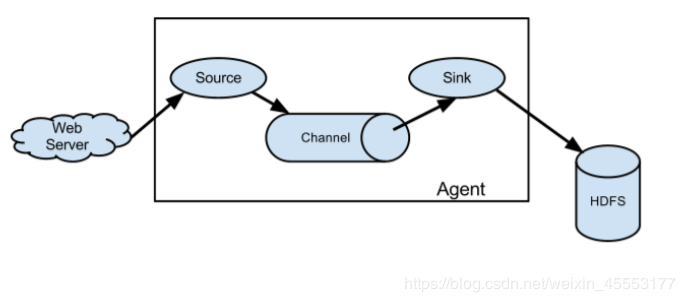

Flume 组成架构如图所示:

1.2 Flume 基础架构

Flume 组成架构如图所示:

下面我们来详细介绍一下 Flume 架构中的组件:

1.2.1 Agent

Agent 是一个 JVM 进程,它以事件的形式将数据从源头送至目的。

Agent 主要有 3 个部分组成,Source、Channel、Sink。

1.2.2 Source

Source 是负责接收数据到 Flume Agent 的组件。Source 组件可以处理各种类型、各种

格式的日志数据,包括 avro、thrift、exec、jms、spooling directory、netcat、sequence

generator、syslog、http、legacy。

1.2.3 Sink

Sink 不断地轮询 Channel 中的事件且批量地移除它们,并将这些事件批量写入到存储

或索引系统、或者被发送到另一个 Flume Agent。

Sink 组件目的地包括 hdfs、logger、avro、thrift、ipc、file、HBase、solr、自定

义。

1.2.4 Channel

Channel 是位于 Source 和 Sink 之间的缓冲区。因此,Channel 允许 Source 和 Sink 运

作在不同的速率上。Channel 是线程安全的,可以同时处理几个 Source 的写入操作和几个

Sink 的读取操作。

Flume 自带两种 Channel:Memory Channel 和 File Channel 以及 Kafka Channel。

Memory Channel 是内存中的队列。Memory Channel 在不需要关心数据丢失的情景下适

用。如果需要关心数据丢失,那么 Memory Channel 就不应该使用,因为程序死亡、机器宕

机或者重启都会导致数据丢失。

File Channel 将所有事件写到磁盘。因此在程序关闭或机器宕机的情况下不会丢失数

据。

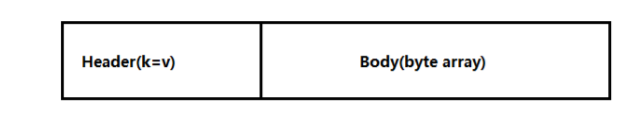

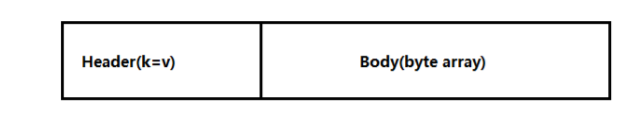

1.2.5 Event

传输单元,Flume 数据传输的基本单元,以 Event 的形式将数据从源头送至目的地。

Event 由 Header 和 Body 两部分组成,Header 用来存放该 event 的一些属性,为 K-V 结构,

Body 用来存放该条数据,形式为字节数组。

下面我们来详细介绍一下 Flume 架构中的组件:

1.2.1 Agent

Agent 是一个 JVM 进程,它以事件的形式将数据从源头送至目的。

Agent 主要有 3 个部分组成,Source、Channel、Sink。

1.2.2 Source

Source 是负责接收数据到 Flume Agent 的组件。Source 组件可以处理各种类型、各种

格式的日志数据,包括 avro、thrift、exec、jms、spooling directory、netcat、sequence

generator、syslog、http、legacy。

1.2.3 Sink

Sink 不断地轮询 Channel 中的事件且批量地移除它们,并将这些事件批量写入到存储

或索引系统、或者被发送到另一个 Flume Agent。

Sink 组件目的地包括 hdfs、logger、avro、thrift、ipc、file、HBase、solr、自定

义。

1.2.4 Channel

Channel 是位于 Source 和 Sink 之间的缓冲区。因此,Channel 允许 Source 和 Sink 运

作在不同的速率上。Channel 是线程安全的,可以同时处理几个 Source 的写入操作和几个

Sink 的读取操作。

Flume 自带两种 Channel:Memory Channel 和 File Channel 以及 Kafka Channel。

Memory Channel 是内存中的队列。Memory Channel 在不需要关心数据丢失的情景下适

用。如果需要关心数据丢失,那么 Memory Channel 就不应该使用,因为程序死亡、机器宕

机或者重启都会导致数据丢失。

File Channel 将所有事件写到磁盘。因此在程序关闭或机器宕机的情况下不会丢失数

据。

1.2.5 Event

传输单元,Flume 数据传输的基本单元,以 Event 的形式将数据从源头送至目的地。

Event 由 Header 和 Body 两部分组成,Header 用来存放该 event 的一些属性,为 K-V 结构,

Body 用来存放该条数据,形式为字节数组。

2.1 Flume 安装部署

2.1.1 安装地址

1) Flume 官网地址

http://flume.apache.org/

2)文档查看地址

http://flume.apache.org/FlumeUserGuide.html

3)下载地址

http://archive.apache.org/dist/flume/

2.1.2 安装部署

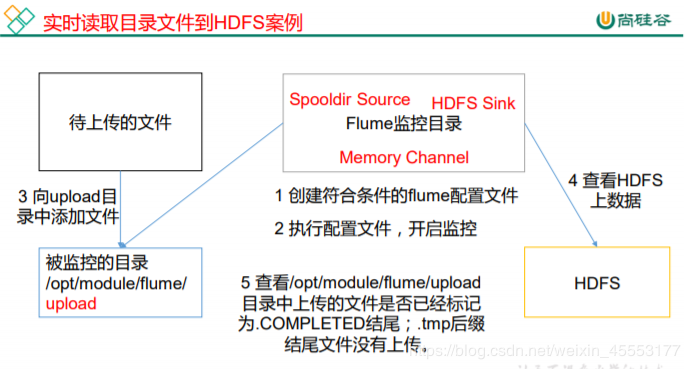

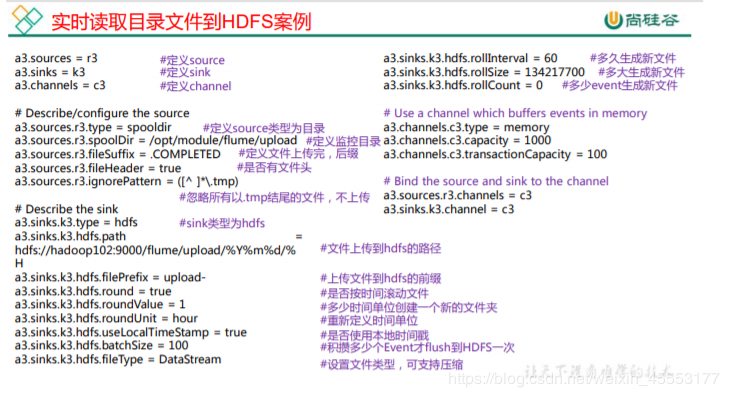

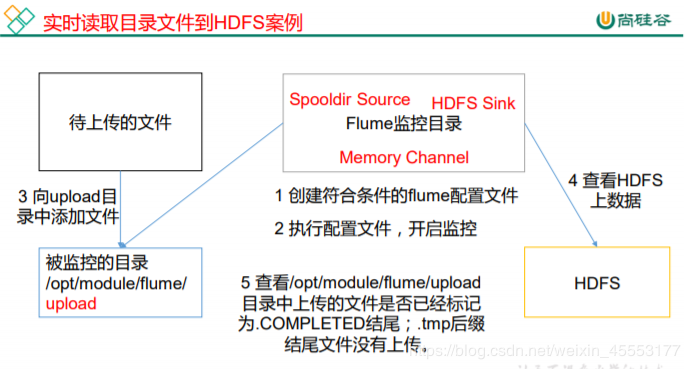

2.2.1 实时监控目录下多个新文件(从本地上传到HDFS)

1)案例需求:使用 Flume 监听整个目录的文件,并上传至 HDFS

2)需求分析:

2.1 Flume 安装部署

2.1.1 安装地址

1) Flume 官网地址

http://flume.apache.org/

2)文档查看地址

http://flume.apache.org/FlumeUserGuide.html

3)下载地址

http://archive.apache.org/dist/flume/

2.1.2 安装部署

2.2.1 实时监控目录下多个新文件(从本地上传到HDFS)

1)案例需求:使用 Flume 监听整个目录的文件,并上传至 HDFS

2)需求分析:

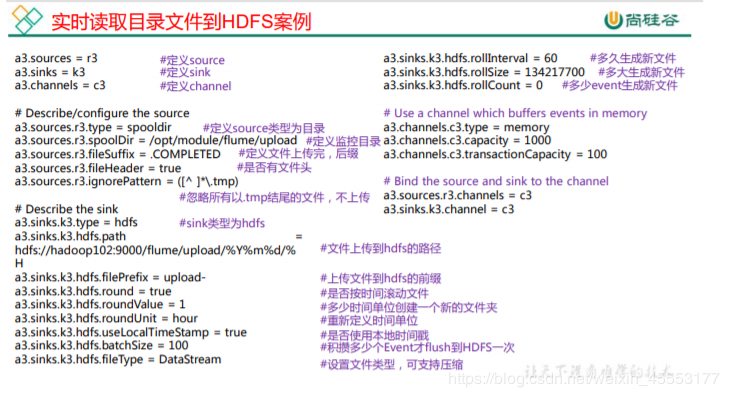

实现步骤:

1.创建配置文件 flume-dir-hdfs.conf

实现步骤:

1.创建配置文件 flume-dir-hdfs.conf

2.修改配置文件 flume-env.sh

2.修改配置文件 flume-env.sh

作者:可爱的杨一凡

作者:可爱的杨一凡

1.2 Flume 基础架构

Flume 组成架构如图所示:

1.2 Flume 基础架构

Flume 组成架构如图所示:

下面我们来详细介绍一下 Flume 架构中的组件:

1.2.1 Agent

Agent 是一个 JVM 进程,它以事件的形式将数据从源头送至目的。

Agent 主要有 3 个部分组成,Source、Channel、Sink。

1.2.2 Source

Source 是负责接收数据到 Flume Agent 的组件。Source 组件可以处理各种类型、各种

格式的日志数据,包括 avro、thrift、exec、jms、spooling directory、netcat、sequence

generator、syslog、http、legacy。

1.2.3 Sink

Sink 不断地轮询 Channel 中的事件且批量地移除它们,并将这些事件批量写入到存储

或索引系统、或者被发送到另一个 Flume Agent。

Sink 组件目的地包括 hdfs、logger、avro、thrift、ipc、file、HBase、solr、自定

义。

1.2.4 Channel

Channel 是位于 Source 和 Sink 之间的缓冲区。因此,Channel 允许 Source 和 Sink 运

作在不同的速率上。Channel 是线程安全的,可以同时处理几个 Source 的写入操作和几个

Sink 的读取操作。

Flume 自带两种 Channel:Memory Channel 和 File Channel 以及 Kafka Channel。

Memory Channel 是内存中的队列。Memory Channel 在不需要关心数据丢失的情景下适

用。如果需要关心数据丢失,那么 Memory Channel 就不应该使用,因为程序死亡、机器宕

机或者重启都会导致数据丢失。

File Channel 将所有事件写到磁盘。因此在程序关闭或机器宕机的情况下不会丢失数

据。

1.2.5 Event

传输单元,Flume 数据传输的基本单元,以 Event 的形式将数据从源头送至目的地。

Event 由 Header 和 Body 两部分组成,Header 用来存放该 event 的一些属性,为 K-V 结构,

Body 用来存放该条数据,形式为字节数组。

下面我们来详细介绍一下 Flume 架构中的组件:

1.2.1 Agent

Agent 是一个 JVM 进程,它以事件的形式将数据从源头送至目的。

Agent 主要有 3 个部分组成,Source、Channel、Sink。

1.2.2 Source

Source 是负责接收数据到 Flume Agent 的组件。Source 组件可以处理各种类型、各种

格式的日志数据,包括 avro、thrift、exec、jms、spooling directory、netcat、sequence

generator、syslog、http、legacy。

1.2.3 Sink

Sink 不断地轮询 Channel 中的事件且批量地移除它们,并将这些事件批量写入到存储

或索引系统、或者被发送到另一个 Flume Agent。

Sink 组件目的地包括 hdfs、logger、avro、thrift、ipc、file、HBase、solr、自定

义。

1.2.4 Channel

Channel 是位于 Source 和 Sink 之间的缓冲区。因此,Channel 允许 Source 和 Sink 运

作在不同的速率上。Channel 是线程安全的,可以同时处理几个 Source 的写入操作和几个

Sink 的读取操作。

Flume 自带两种 Channel:Memory Channel 和 File Channel 以及 Kafka Channel。

Memory Channel 是内存中的队列。Memory Channel 在不需要关心数据丢失的情景下适

用。如果需要关心数据丢失,那么 Memory Channel 就不应该使用,因为程序死亡、机器宕

机或者重启都会导致数据丢失。

File Channel 将所有事件写到磁盘。因此在程序关闭或机器宕机的情况下不会丢失数

据。

1.2.5 Event

传输单元,Flume 数据传输的基本单元,以 Event 的形式将数据从源头送至目的地。

Event 由 Header 和 Body 两部分组成,Header 用来存放该 event 的一些属性,为 K-V 结构,

Body 用来存放该条数据,形式为字节数组。

2.1 Flume 安装部署

2.1.1 安装地址

1) Flume 官网地址

http://flume.apache.org/

2)文档查看地址

http://flume.apache.org/FlumeUserGuide.html

3)下载地址

http://archive.apache.org/dist/flume/

2.1.2 安装部署

2.2.1 实时监控目录下多个新文件(从本地上传到HDFS)

1)案例需求:使用 Flume 监听整个目录的文件,并上传至 HDFS

2)需求分析:

2.1 Flume 安装部署

2.1.1 安装地址

1) Flume 官网地址

http://flume.apache.org/

2)文档查看地址

http://flume.apache.org/FlumeUserGuide.html

3)下载地址

http://archive.apache.org/dist/flume/

2.1.2 安装部署

2.2.1 实时监控目录下多个新文件(从本地上传到HDFS)

1)案例需求:使用 Flume 监听整个目录的文件,并上传至 HDFS

2)需求分析:

实现步骤:

1.创建配置文件 flume-dir-hdfs.conf

实现步骤:

1.创建配置文件 flume-dir-hdfs.conf

mkdir /usr/local/flume

cd /usr/local/flume

tar -zxvf apache-flume-1.8.0-bin.tar.gz

rm -rf apache-flume-1.8.0-bin.tar.gz

cd /usr/local/flume/apache-flume-1.8.0-bin/conf/

vim flume-dir-hdfs.conf

添加如下内容

a3.sources = r3

a3.sinks = k3

a3.channels = c3

# Describe/configure the source

a3.sources.r3.type = spooldir

a3.sources.r3.spoolDir = /opt/log

a3.sources.r3.fileSuffix = .COMPLETED

a3.sources.r3.fileHeader = true

#忽略所有以.tmp 结尾的文件,不上传

a3.sources.r3.ignorePattern = ([^ ]*\.tmp)

# Describe the sink

a3.sinks.k3.type = hdfs

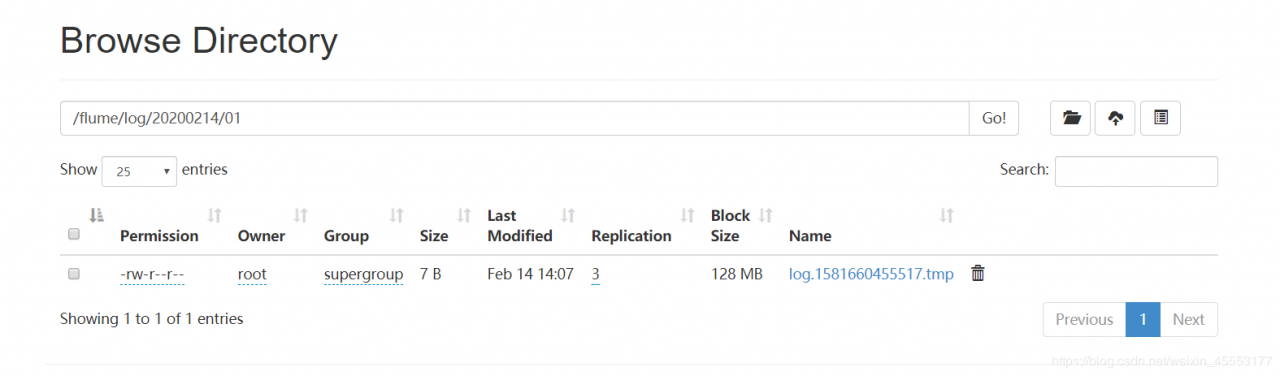

a3.sinks.k3.hdfs.path = hdfs://hadoop111:9000/flume/log/%Y%m%d/%H

#上传文件的前缀

a3.sinks.k3.hdfs.filePrefix = log

#是否按照时间滚动文件夹

a3.sinks.k3.hdfs.round = true

#多少时间单位创建一个新的文件夹

a3.sinks.k3.hdfs.roundValue = 1

#重新定义时间单位

a3.sinks.k3.hdfs.roundUnit = hour

#是否使用本地时间戳

a3.sinks.k3.hdfs.useLocalTimeStamp = true

#积攒多少个 Event 才 flush 到 HDFS 一次

a3.sinks.k3.hdfs.batchSize = 100

#设置文件类型,可支持压缩

a3.sinks.k3.hdfs.fileType = DataStream

#多久生成一个新的文件

a3.sinks.k3.hdfs.rollInterval = 60

#设置每个文件的滚动大小大概是 128M

a3.sinks.k3.hdfs.rollSize = 134217700

#文件的滚动与 Event 数量无关

a3.sinks.k3.hdfs.rollCount = 0

# Use a channel which buffers events in memory

a3.channels.c3.type = memory

a3.channels.c3.capacity = 1000

a3.channels.c3.transactionCapacity = 100

# Bind the source and sink to the channel

a3.sources.r3.channels = c3

a3.sinks.k3.channel = c3

2.修改配置文件 flume-env.sh

2.修改配置文件 flume-env.sh

cp flume-env.sh.template flume-env.sh

vim flume-env.sh

export JAVA_HOME=/usr/local/java/jdk1.8.0_211

3. 启动监控文件夹命令

./flume-ng agent -c ../conf/ -f ../conf/flume-dir-hdfs.conf -n a3 -Dflume.root.logger=INFO,console

说明:在使用 Spooling Directory Source 时

不要在监控目录中创建并持续修改文件

上传完成的文件会以.COMPLETED 结尾

被监控文件夹每 500 毫秒扫描一次文件变动

4. 向 lod 文件夹中添加文件

在/opt/log 目录下创建 b.txt 文件夹

mkdir b.txt

cp b.txt log/

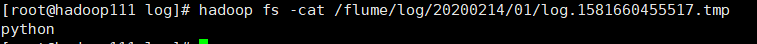

查看

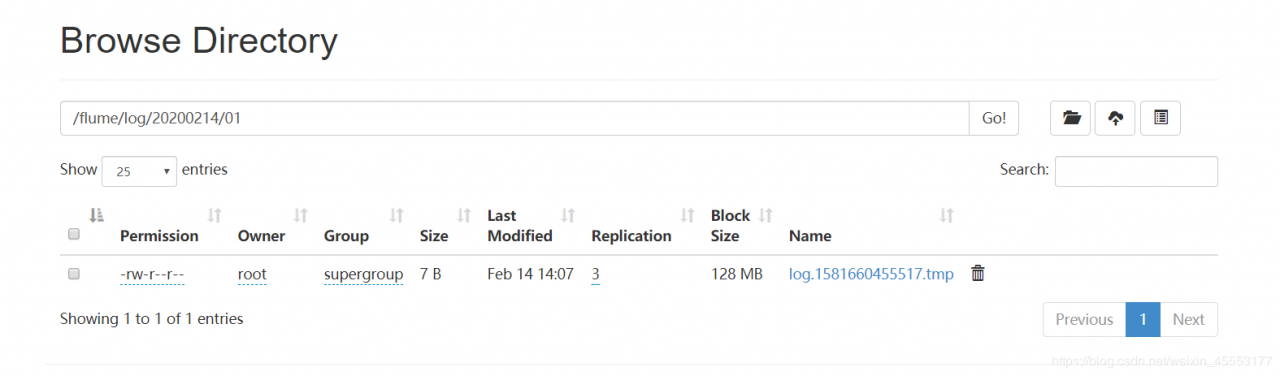

hadoop fs -cat /flume/log/20200214/01/log.1581660455517.tmp

作者:可爱的杨一凡

作者:可爱的杨一凡

相关文章

Claire

2021-03-29

Rachel

2023-07-20

Psyche

2023-07-20

Winola

2023-07-20

Gella

2023-07-20

Grizelda

2023-07-20

Janna

2023-07-20

Ophelia

2023-07-21

Crystal

2023-07-21

Laila

2023-07-21

Aine

2023-07-21

Bliss

2023-07-21

Lillian

2023-07-21

Tertia

2023-07-21

Olive

2023-07-21

Angie

2023-07-21

Nora

2023-07-24